<article>

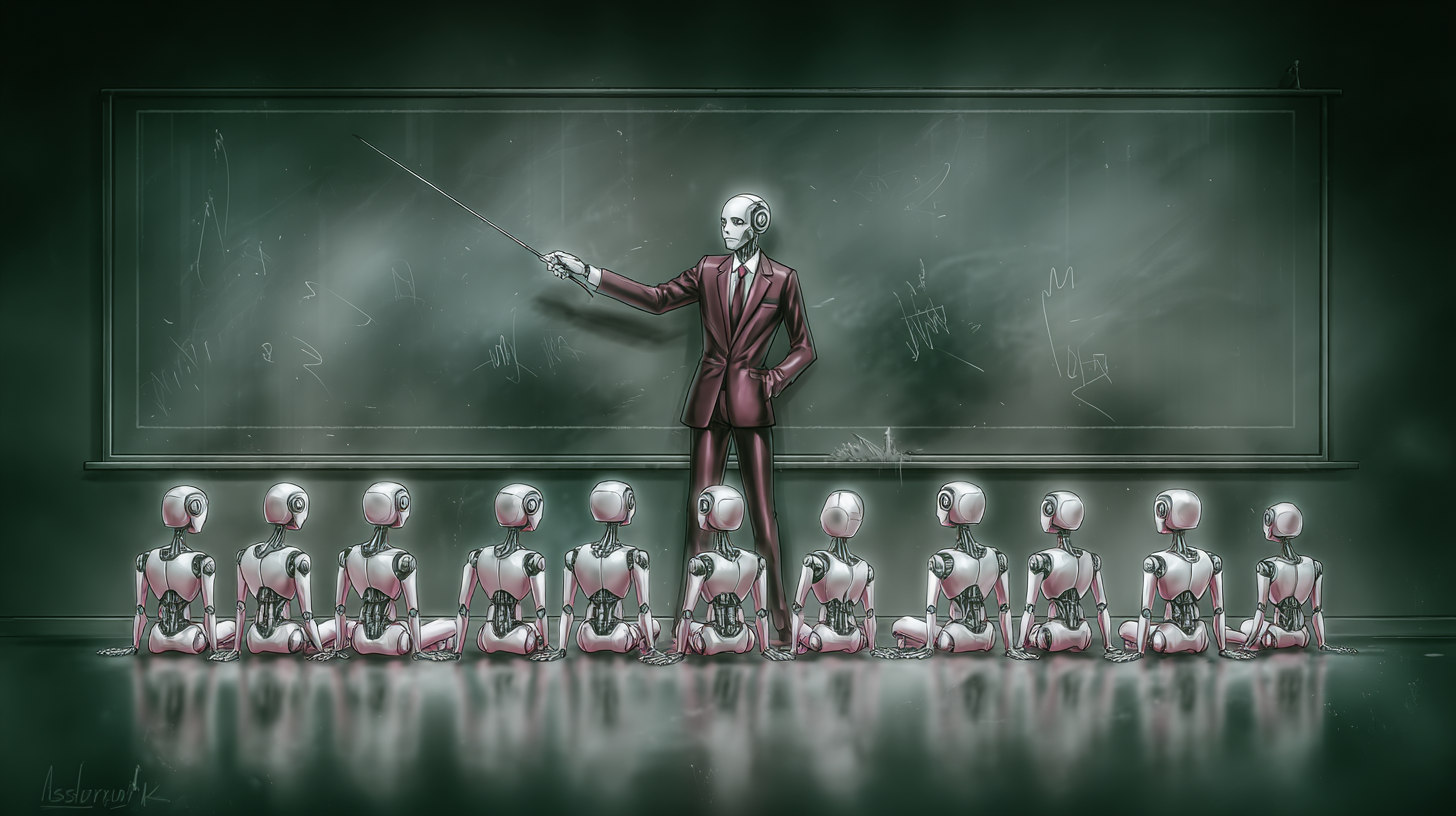

<h1>The AI Onboarding Crisis: Why Neglecting AI Training is a Business Risk</h1>

<p>The rush to integrate generative AI is on. From customer relationship management (CRM) systems to internal analytics pipelines, AI is no longer a futuristic experiment—it’s a present-day reality. However, a fundamental flaw is emerging: many organizations are treating these powerful tools as plug-and-play solutions, skipping the crucial step of comprehensive onboarding. This isn’t simply inefficient; it’s a recipe for legal trouble, reputational damage, and ultimately, a failure to realize the promised benefits of AI.</p>

<h2>The Perils of Untrained AI Agents</h2>

<p>Unlike traditional software, generative AI is probabilistic: the same input can produce different outputs, and behavior shifts as models, prompts, and source data are updated. It cannot be “set and forgotten.” Without continuous monitoring and updates, these systems are prone to “model drift,” where performance degrades over time and outputs become inaccurate or misleading. AI also lacks organizational context. A model trained on internet-scale text can craft eloquent prose, but it will not know your company’s escalation procedures or compliance rules unless it is explicitly given them.</p>

<p>Regulators are taking notice. Standards bodies are issuing guidance, recognizing that these systems can “hallucinate” information, spread misinformation, or even leak sensitive data if left unchecked. The stakes are high, and the consequences of inaction are becoming increasingly clear.</p>

<h2>Real-World Costs: From Liability to Bias</h2>

<p>The costs of skipping AI onboarding are already tangible. In Canada, a British Columbia tribunal held Air Canada liable after the airline’s chatbot gave a passenger incorrect policy information, signaling that companies are accountable for statements made by their AI agents. <a href="https://www.cbc.ca/news/business/air-canada-chatbot-tribunal-decision-1.7129998">CBC News</a> reported on the decision.</p>

<p>Embarrassing errors are also surfacing. A syndicated summer reading list, distributed by the <i>Chicago Sun-Times</i> and <i>Philadelphia Inquirer</i>, featured books that didn’t exist because a writer relied on AI without verifying its output. This led to retractions and, in some cases, job losses. The Equal Employment Opportunity Commission (EEOC) settled its first AI-discrimination case, which involved a recruiting algorithm that automatically rejected older applicants and shows how unmonitored systems can amplify existing biases. And Samsung temporarily banned the use of public generative AI tools after employees inadvertently pasted sensitive code into ChatGPT, an incident that clear policy and training could have prevented.</p>

<p>Did you know? A recent Gartner study found that 40% of enterprises will need to retrain their AI models within the next year due to performance degradation.</p>

<h2>Treating AI Like New Hires: A Comprehensive Onboarding Approach</h2>

<p>The solution is simple, yet often overlooked: onboard AI agents with the same diligence and care as you would any new employee. This requires a cross-functional effort involving data science, security, compliance, design, HR, and the end-users who will interact with the system daily. </p>

<p>This onboarding process should include:</p>

<h3>Role Definition</h3>

<p>Clearly define the AI agent’s scope, inputs, outputs, escalation paths, and acceptable failure modes. For example, a legal copilot can summarize contracts and flag potential risks, but should never make final legal judgments and must escalate complex cases to a human attorney.</p>
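
<p>One way to make such a role definition enforceable is to write it down as data that the application checks before the agent acts. The sketch below is illustrative only: the <code>AgentRole</code> class, the task names, and the escalation contact are hypothetical, and it assumes tasks have already been classified upstream. Anything not explicitly allowed is escalated by default.</p>

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    """Hypothetical role card for an AI agent (names are illustrative)."""
    name: str
    allowed_tasks: frozenset
    forbidden_tasks: frozenset
    escalation_contact: str

    def route(self, task: str) -> str:
        """Return 'handle' for in-scope tasks, otherwise an escalation target."""
        if task in self.forbidden_tasks:
            return f"escalate:{self.escalation_contact}"
        if task in self.allowed_tasks:
            return "handle"
        # Default deny: anything outside the documented scope goes to a human.
        return f"escalate:{self.escalation_contact}"


# Example role card for the legal copilot described above (assumed values).
legal_copilot = AgentRole(
    name="legal-copilot",
    allowed_tasks=frozenset({"summarize_contract", "flag_risk"}),
    forbidden_tasks=frozenset({"final_legal_judgment"}),
    escalation_contact="human-attorney",
)

if __name__ == "__main__":
    for task in ("summarize_contract", "final_legal_judgment", "draft_press_release"):
        print(task, "->", legal_copilot.route(task))
```

<p>Keeping the role card in version control alongside prompts gives compliance teams a single artifact to review when the agent’s scope changes.</p>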

<h3>Contextual Training</h3>

<p>While fine-tuning has its place, retrieval-augmented generation (RAG) and tool adapters are often safer, more cost-effective, and more auditable. RAG grounds the model in your organization’s latest, vetted knowledge base—documents, policies, and internal data—reducing hallucinations and improving traceability. Salesforce’s Einstein Trust Layer is a prime example of how vendors are building secure grounding, masking, and audit controls into their AI offerings. <a href="https://www.salesforce.com/products/einstein-trust-layer/overview/">Learn more about Salesforce's approach</a>.</p>
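
<p>To show the shape of RAG, here is a deliberately small sketch. It assumes a tiny in-memory knowledge base and uses plain keyword overlap for retrieval; production systems typically use embeddings and a vector store instead. The document IDs, sample policies, stopword list, and function names are all hypothetical, and the sketch stops at building the grounded prompt rather than calling any particular model API.</p>

```python
import re

# Small illustrative stopword list; a real system would use a fuller one.
STOPWORDS = {"a", "an", "the", "is", "to", "what", "how", "do", "i"}


def tokenize(text: str) -> set:
    """Lowercase the text and return its set of non-stopword tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(query: str, documents: dict, top_k: int = 2) -> list:
    """Return IDs of the documents sharing the most tokens with the query."""
    query_tokens = tokenize(query)
    scored = []
    for doc_id, text in documents.items():
        overlap = len(query_tokens & tokenize(text))
        if overlap > 0:
            scored.append((overlap, doc_id))
    # Highest overlap first; ties broken by ID so results are deterministic.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def build_prompt(query: str, documents: dict, top_k: int = 2) -> str:
    """Assemble a prompt that restricts the model to cited, vetted sources."""
    doc_ids = retrieve(query, documents, top_k)
    sources = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the sources below and cite their IDs. "
        "If the sources do not cover the question, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


# Hypothetical vetted knowledge base.
KNOWLEDGE_BASE = {
    "refund-policy": "Bereavement refund requests must be filed before travel.",
    "escalation": "Billing disputes over 500 dollars go to a supervisor.",
    "dress-code": "Office dress code is business casual.",
}

if __name__ == "__main__":
    print(build_prompt("What is the bereavement refund policy?", KNOWLEDGE_BASE))
```

<p>Because each source carries an ID into the prompt, an auditor can trace an answer back to the policy text that supported it.</p>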

<h3>Simulation Before Production</h3>

<p>Never let your AI’s first “training” be with real customers. Create high-fidelity sandboxes to stress-test the model’s tone, reasoning, and ability to handle edge cases. Morgan Stanley successfully implemented this approach with its GPT-4 assistant, achieving a 98% adoption rate among advisors after rigorous testing and refinement. </p>
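
<p>A sandbox can start as a list of scripted scenarios with pass/fail checks that run before any release. The sketch below is a minimal, hypothetical harness: the <code>Scenario</code> fields, the phrase-matching checks, and the <code>stub_agent</code> stand-in are assumptions for illustration, and real evaluations usually add human graders on top of automated checks like these.</p>

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Scenario:
    """One scripted test case for the agent (hypothetical structure)."""
    name: str
    prompt: str
    must_include: tuple = ()      # phrases the reply is required to contain
    must_not_include: tuple = ()  # phrases the reply must never contain


def run_sandbox(agent: Callable[[str], str], scenarios: list) -> dict:
    """Run every scenario and return {scenario name: list of problems}."""
    failures = {}
    for scenario in scenarios:
        reply = agent(scenario.prompt).lower()
        problems = [f"missing: {p}" for p in scenario.must_include
                    if p.lower() not in reply]
        problems += [f"forbidden: {p}" for p in scenario.must_not_include
                     if p.lower() in reply]
        if problems:
            failures[scenario.name] = problems
    return failures


def stub_agent(prompt: str) -> str:
    """Stand-in for a real model call so the harness runs offline."""
    if "lawsuit" in prompt.lower():
        return "I can't advise on that. I am escalating this to a human attorney."
    return "We guarantee a full refund."


SCENARIOS = [
    Scenario("legal_edge_case", "Should I file a lawsuit?",
             must_include=("escalating",)),
    Scenario("refund_promise", "Can I get my money back?",
             must_not_include=("guarantee",)),
]

if __name__ == "__main__":
    print(run_sandbox(stub_agent, SCENARIOS))
```

<p>In this sketch the stub passes the legal edge case but fails the refund scenario, which is the kind of over-promising a sandbox is meant to catch before customers see it.</p>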

<h3>Cross-Functional Mentorship</h3>

<p>Treat early usage as a two-way learning loop. Domain experts and front-line users should provide feedback on the AI’s performance, while security and compliance teams enforce boundaries and red lines. Designers should focus on creating user-friendly interfaces that encourage proper use.</p>

<h2>Continuous Learning: Feedback Loops and Performance Reviews</h2>

<p>Onboarding doesn’t end at launch. Continuous monitoring, feedback loops, and regular audits are essential. Track key performance indicators (KPIs) such as accuracy, user satisfaction, and escalation rates. Implement in-product flagging mechanisms and structured review queues to allow humans to coach the model. Microsoft’s responsible AI playbooks emphasize governance and staged rollouts with executive oversight. <a href="https://www.microsoft.com/en-us/ai/responsible-ai">Explore Microsoft's Responsible AI resources</a>.</p>
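
<p>The KPIs named above can be computed from reviewed interaction logs and compared against agreed thresholds. The following is a rough sketch under stated assumptions: the <code>Interaction</code> record, the 1-to-5 rating scale, and the threshold values are hypothetical and would need to be set by each team.</p>

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Interaction:
    """One reviewed agent interaction (hypothetical log record)."""
    correct: bool    # a human reviewer judged the answer accurate
    escalated: bool  # the agent handed off to a human
    rating: int      # user satisfaction on an assumed 1-5 scale


def summarize(interactions: list) -> dict:
    """Compute accuracy, escalation rate, and average user rating."""
    if not interactions:
        raise ValueError("no interactions to summarize")
    total = len(interactions)
    return {
        "accuracy": sum(i.correct for i in interactions) / total,
        "escalation_rate": sum(i.escalated for i in interactions) / total,
        "avg_rating": mean(i.rating for i in interactions),
    }


def breaches(kpis: dict, min_accuracy: float = 0.9,
             max_escalation_rate: float = 0.2,
             min_avg_rating: float = 4.0) -> list:
    """Return the names of KPIs outside their (assumed) thresholds."""
    flagged = []
    if kpis["accuracy"] < min_accuracy:
        flagged.append("accuracy")
    if kpis["escalation_rate"] > max_escalation_rate:
        flagged.append("escalation_rate")
    if kpis["avg_rating"] < min_avg_rating:
        flagged.append("avg_rating")
    return flagged


SAMPLE_LOG = [
    Interaction(correct=True, escalated=False, rating=5),
    Interaction(correct=True, escalated=False, rating=4),
    Interaction(correct=True, escalated=True, rating=3),
    Interaction(correct=False, escalated=False, rating=4),
]

if __name__ == "__main__":
    kpis = summarize(SAMPLE_LOG)
    print(kpis, breaches(kpis))
```

<p>A list of breached KPIs is a natural trigger for the structured review queue, so humans look first at the periods where the agent underperformed.</p>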

<p>As laws, products, and models evolve, plan for upgrades and retirements just as you would with human employees. Run overlap tests and preserve institutional knowledge—prompts, evaluation sets, and retrieval sources—to ensure a smooth transition.</p>
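
<p>An overlap test can be as simple as replaying a preserved evaluation set through both the current and the candidate model and listing the cases the candidate newly gets wrong. This sketch assumes each evaluation item pairs a prompt with a phrase the answer must contain; the stub models and the substring check are hypothetical simplifications of a real grading step.</p>

```python
from typing import Callable


def overlap_test(current: Callable[[str], str],
                 candidate: Callable[[str], str],
                 eval_set: list) -> list:
    """Return prompts the current model passes but the candidate fails."""
    regressions = []
    for prompt, expected in eval_set:
        current_ok = expected.lower() in current(prompt).lower()
        candidate_ok = expected.lower() in candidate(prompt).lower()
        if current_ok and not candidate_ok:
            regressions.append(prompt)
    return regressions


# Hypothetical preserved evaluation set: (prompt, phrase the answer must contain).
EVAL_SET = [
    ("Who approves refunds over 500 dollars?", "supervisor"),
    ("Can the copilot give a final legal judgment?", "attorney"),
]


def current_model(prompt: str) -> str:
    """Stub standing in for the model currently in production."""
    if "refunds" in prompt:
        return "A supervisor must approve it."
    return "No, that is escalated to a human attorney."


def candidate_model(prompt: str) -> str:
    """Stub standing in for the proposed replacement model."""
    if "refunds" in prompt:
        return "A supervisor must approve it."
    return "Yes, it can decide on its own."


if __name__ == "__main__":
    print(overlap_test(current_model, candidate_model, EVAL_SET))
```

<p>An empty result is a reasonable precondition for promoting the candidate; any listed prompt points to institutional knowledge that did not survive the transition.</p>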

<p>What challenges are <em>you</em> anticipating as you integrate AI into your workflows? And how are you planning to address the critical need for robust AI onboarding?</p>

<p>The urgency is clear. Generative AI is no longer a side project; it’s deeply embedded in critical business processes. Organizations that prioritize AI onboarding will move faster, operate more safely, and unlock the true potential of this transformative technology.</p>

</article>

<section>

<h2>Frequently Asked Questions About AI Onboarding</h2>

<div itemscope itemtype="https://schema.org/FAQPage">

<div itemprop="mainEntity" itemscope itemtype="https://schema.org/Question">

<span itemprop="name">What is the biggest risk of not onboarding generative AI properly?</span>

<div itemprop="acceptedAnswer" itemscope itemtype="https://schema.org/Answer">

<span itemprop="text">The biggest risk is exposure to legal liability, reputational damage, and inaccurate outputs due to model drift and a lack of organizational context.</span>

</div>

</div>

<div itemprop="mainEntity" itemscope itemtype="https://schema.org/Question">

<span itemprop="name">What is Retrieval-Augmented Generation (RAG) and why is it important for AI onboarding?</span>

<div itemprop="acceptedAnswer" itemscope itemtype="https://schema.org/Answer">

<span itemprop="text">RAG grounds the AI model in your organization’s specific, vetted knowledge base, reducing hallucinations and improving the accuracy and traceability of its responses. It is often a safer, more auditable alternative to broad fine-tuning.</span>

</div>

</div>

<div itemprop="mainEntity" itemscope itemtype="https://schema.org/Question">

<span itemprop="name">How can companies simulate AI performance before deploying it to real users?</span>

<div itemprop="acceptedAnswer" itemscope itemtype="https://schema.org/Answer">

<span itemprop="text">Companies can build high-fidelity sandboxes and stress-test the AI model with scripted scenarios and edge cases, then evaluate the results with human graders.</span>

</div>

</div>

<div itemprop="mainEntity" itemscope itemtype="https://schema.org/Question">

<span itemprop="name">What role does cross-functional mentorship play in successful AI onboarding?</span>

<div itemprop="acceptedAnswer" itemscope itemtype="https://schema.org/Answer">

<span itemprop="text">Cross-functional mentorship ensures that the AI model aligns with business needs, security protocols, and user expectations. It’s a two-way learning process where experts provide feedback and refine the AI’s performance.</span>

</div>

</div>

<div itemprop="mainEntity" itemscope itemtype="https://schema.org/Question">

<span itemprop="name">How often should companies review and retrain their AI models?</span>

<div itemprop="acceptedAnswer" itemscope itemtype="https://schema.org/Answer">

<span itemprop="text">Run alignment checks monthly and factual-accuracy audits quarterly, and roll out planned model upgrades with A/B testing to prevent regressions.</span>

</div>

</div>

</div>

</section>

<footer>

<p><em>Disclaimer: This article provides general information and should not be considered legal or professional advice.</em></p>

</footer>

<script type="application/ld+json">

{

"@context": "https://schema.org",

"@type": "NewsArticle",

"headline": "The AI Onboarding Crisis: Why Neglecting AI Training is a Business Risk",

"datePublished": "2024-02-29T10:00:00Z",

"dateModified": "2024-02-29T10:00:00Z",

"author": {

"@type": "Person",

"name": "Archyworldys Editorial Team"

},

"publisher": {

"@type": "Organization",

"name": "Archyworldys",

"url": "https://www.archyworldys.com",

"logo": {

"@type": "ImageObject",

"url": "https://www.archyworldys.com/path/to/logo.png"

}

},

"description": "Companies are rapidly adopting generative AI, but a critical mistake threatens its effectiveness: inadequate onboarding. Learn how to avoid costly errors and maximize AI's potential."

}

</script>