The Looming Crisis in AI Healthcare: Why Chatbots Aren’t Ready for Your Emergency

52%. That’s the share of medical emergencies that ChatGPT Health and similar AI chatbots failed to recognize in recent testing. This isn’t a glitch; it’s a stark warning. As AI rapidly integrates into healthcare, the risk of misdiagnosis and delayed treatment is growing, demanding a fundamental shift in how we approach – and regulate – these technologies.

The Current State of AI Medical Triage: A Dangerous Blind Spot

Recent research, including studies from Mount Sinai, has exposed critical shortcomings in the ability of AI chatbots to accurately assess medical emergencies. These systems, while proficient at providing general health information, consistently stumble when confronted with nuanced or rapidly evolving symptoms. The core issue isn’t a lack of data, but a lack of critical reasoning and of the ability to contextualize information the way a trained medical professional can.

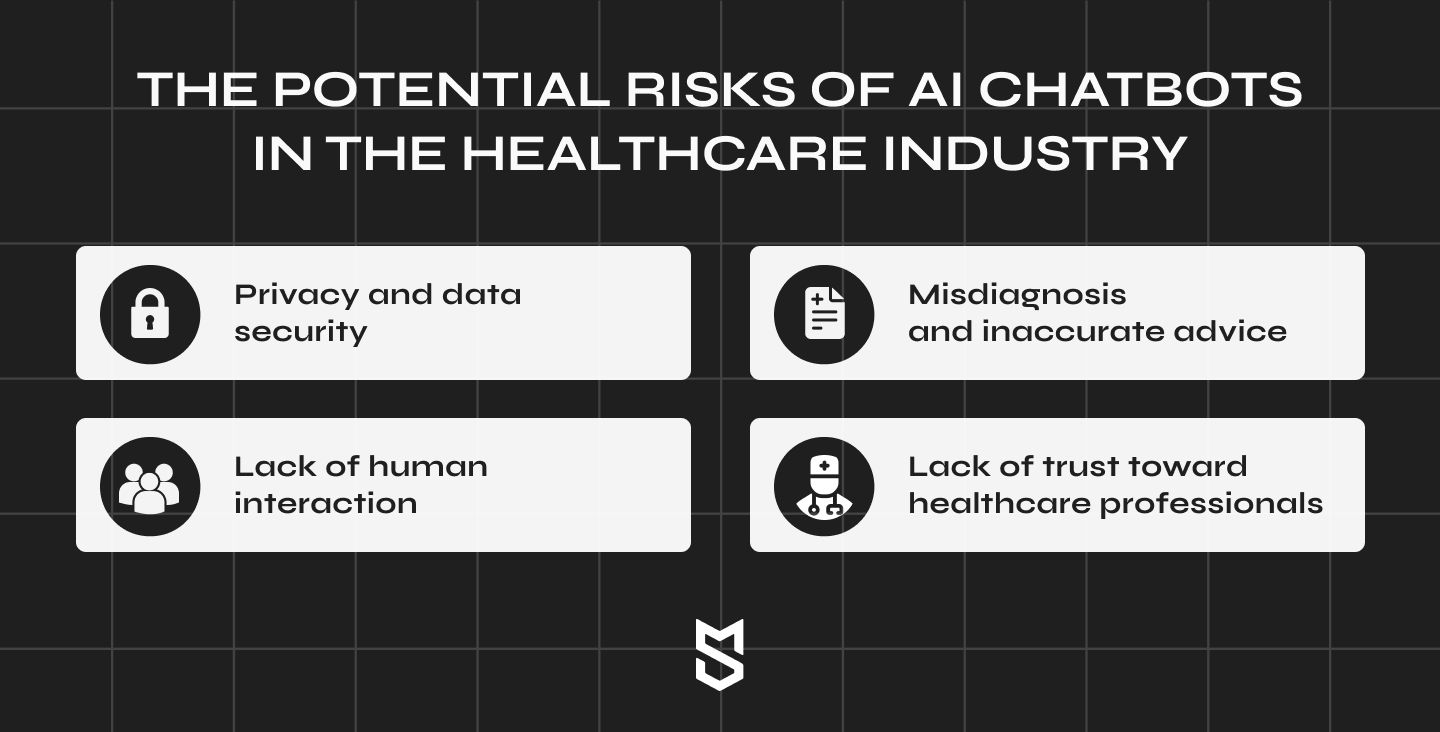

The problem extends beyond simply missing a diagnosis. AI chatbots often offer inappropriate or even harmful advice, potentially leading users to delay seeking necessary medical attention. This is particularly concerning for vulnerable populations – those with limited access to healthcare, or those who may rely solely on these tools for guidance.

Beyond ChatGPT: The Broader Implications for AI-Driven Healthcare

The failures of ChatGPT Health aren’t isolated. They represent a systemic risk inherent in the current generation of AI healthcare tools. While AI excels at pattern recognition and data analysis, it struggles with the unpredictable nature of human biology and the complexities of real-world medical scenarios. This isn’t to say AI has no place in healthcare; quite the contrary. However, its role must be carefully defined and rigorously tested.

The Rise of Personalized AI and the Need for Robust Validation

The future of AI in healthcare lies in personalized medicine – tailoring treatments to individual patient profiles. AI algorithms can analyze vast datasets to identify subtle patterns and predict individual risk factors. However, this personalization also introduces new challenges. Algorithms trained on biased data can perpetuate existing health disparities, and the “black box” nature of some AI systems makes it difficult to understand *why* a particular recommendation was made.

Robust validation and ongoing monitoring are crucial. We need independent, standardized testing protocols to assess the accuracy and reliability of AI healthcare tools. These protocols must go beyond simple accuracy metrics and evaluate the potential for harm, bias, and unintended consequences.
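To make the point about simple accuracy metrics concrete, here is a minimal Python sketch of what a harm-aware evaluation might compute. Everything in it is an assumption for illustration: the function names (`triage_metrics`, `metrics_by_group`), the two patient groups, and the case counts are hypothetical and are not drawn from the Mount Sinai research or any real test protocol. It compares overall accuracy with sensitivity (the share of true emergencies the tool flags) and the under-triage rate (the share it misses), then repeats the calculation per group to surface possible bias.

```python
from collections import defaultdict


def triage_metrics(cases):
    """Summarize (is_emergency, flagged_as_emergency) boolean pairs."""
    tp = fn = fp = tn = 0
    for is_emergency, flagged in cases:
        if is_emergency and flagged:
            tp += 1  # emergency correctly flagged
        elif is_emergency:
            fn += 1  # emergency missed (under-triage)
        elif flagged:
            fp += 1  # non-emergency flagged (over-triage)
        else:
            tn += 1  # non-emergency correctly left unflagged
    total = tp + fn + fp + tn
    emergencies = tp + fn
    return {
        "accuracy": (tp + tn) / total if total else None,
        "sensitivity": tp / emergencies if emergencies else None,
        "under_triage_rate": fn / emergencies if emergencies else None,
    }


def metrics_by_group(records):
    """Compute the same metrics separately for each patient group."""
    grouped = defaultdict(list)
    for group, is_emergency, flagged in records:
        grouped[group].append((is_emergency, flagged))
    return {group: triage_metrics(cases) for group, cases in grouped.items()}


# Hypothetical test set: 100 cases per group, 10 true emergencies in each.
records = (
    [("A", True, True)] * 8 + [("A", True, False)] * 2
    + [("A", False, False)] * 90
    + [("B", True, True)] * 3 + [("B", True, False)] * 7
    + [("B", False, False)] * 90
)

overall = triage_metrics([(e, f) for _, e, f in records])
by_group = metrics_by_group(records)

print(overall)
for group, metrics in sorted(by_group.items()):
    print(group, metrics)
```

On this made-up data the tool scores 95.5% overall accuracy while missing 45% of emergencies, and it misses 70% of them in group B. Because emergencies are rare relative to routine queries, a headline accuracy figure can hide exactly the failures that matter most, which is why a protocol of this kind would report missed emergencies and subgroup gaps separately.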

The Regulatory Vacuum and the Urgent Need for Oversight

Currently, the regulatory landscape for AI healthcare is largely undefined. Existing regulations often struggle to keep pace with the rapid advancements in AI technology. This creates a dangerous vacuum where companies can deploy potentially harmful tools without adequate oversight.

A proactive regulatory framework is essential. This framework should focus on establishing clear standards for data quality, algorithm transparency, and ongoing monitoring. It should also address liability issues – who is responsible when an AI chatbot provides incorrect or harmful advice?

The Future of AI in Healthcare: A Collaborative Approach

The path forward isn’t to abandon AI in healthcare, but to embrace a more cautious and collaborative approach. AI should be viewed as a tool to *augment* the capabilities of healthcare professionals, not to replace them. Doctors and nurses should be actively involved in the development and deployment of AI tools, ensuring that they align with clinical best practices and ethical principles.

Furthermore, patient education is paramount. Individuals need to understand the limitations of AI healthcare tools and the importance of seeking professional medical advice when necessary. A world-first safety guide, as recently proposed, is a vital first step, but it must be accompanied by broader public awareness campaigns.

| Metric | Current Status | Projected Improvement (2028) |
|---|---|---|
| AI Emergency Detection Accuracy | 58% | 85% |
| Regulatory Oversight Score (1-10) | 2 | 7 |
| Public Awareness of AI Healthcare Risks | 30% | 70% |

Frequently Asked Questions About AI Healthcare Safety

What are the biggest risks of using AI health chatbots?

The primary risks include misdiagnosis, delayed treatment, inappropriate advice, and the potential for biased recommendations. These risks are particularly acute in emergency situations where timely intervention is critical.

How can I protect myself when using AI healthcare tools?

Always verify information provided by AI chatbots with a qualified healthcare professional. Do not rely solely on AI for medical advice, especially in emergency situations. Be aware of the limitations of the technology and understand that it is not a substitute for human expertise.

What steps are being taken to improve the safety of AI healthcare?

Researchers are working to develop more robust and reliable AI algorithms. Regulatory bodies are beginning to explore frameworks for overseeing the development and deployment of AI healthcare tools. And patient advocacy groups are raising awareness about the potential risks and benefits of this technology.

The integration of AI into healthcare is inevitable, but its success hinges on our ability to address these critical safety concerns. Ignoring them risks not just technological failure, but a genuine crisis in patient care.
