AI Safety Chief Resigns from Anthropic, Citing ‘Interconnected Crises’ Threatening Humanity

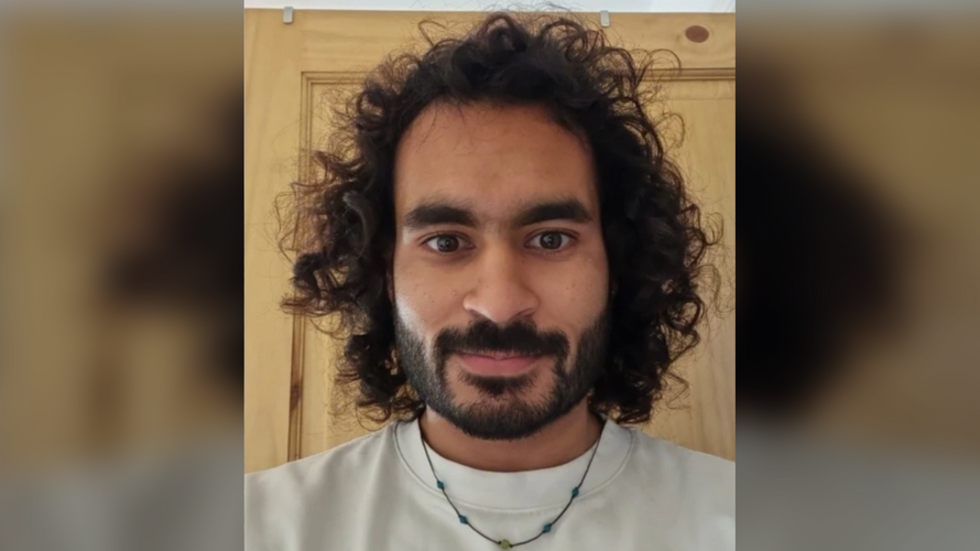

A leading voice in artificial intelligence safety has stepped down from Anthropic, the AI firm behind the Claude chatbot, with a stark warning about the precarious state of the world. Mrinank Sharma, who led Anthropic’s Safeguards Research Team, announced his resignation Monday, stating that the planet is facing a confluence of “interconnected crises” extending far beyond the risks posed by AI itself.

Sharma, an Oxford University graduate, detailed his growing concerns in a resignation letter posted on X (formerly Twitter). He expressed a profound reckoning with “our situation,” emphasizing that the dangers are multifaceted and immediate. His departure comes at a critical juncture for Anthropic, a company simultaneously pushing the boundaries of AI development while grappling with the potential for harm its creations could unleash.

I’ll be moving back to the UK and letting myself become invisible for a period of time.

— mrinank (@MrinankSharma) February 9, 2026

Internal Friction and External Pressures

Sharma’s resignation follows reports of escalating tensions between Anthropic and the U.S. Department of Defense. The Pentagon reportedly seeks to deploy AI for autonomous weapons systems without the safety protocols Anthropic advocates. This disagreement highlights a fundamental conflict between prioritizing rapid technological advancement and ensuring responsible AI development. The rift underscores the complex ethical considerations surrounding the weaponization of artificial intelligence and the potential for unintended consequences.

The timing of Sharma’s announcement, just days after Anthropic released Opus 4.6 – a significantly more powerful version of its Claude AI – suggests internal disagreements regarding safety priorities. Sharma’s letter reveals a struggle to reconcile values with the pressures of innovation. He wrote of repeatedly witnessing the difficulty of “truly let[ting] our values govern our actions,” both within the organization and in society at large.

A Broader Reckoning with Existential Risks

Sharma’s work at Anthropic focused on critical areas such as defending against AI-assisted bioweapons and understanding the potential for AI to fundamentally alter human nature. His “final project” explored how AI assistants could diminish our humanity or distort our perceptions. Now, he plans to return to the United Kingdom to pursue a degree in poetry, seeking a period of “invisibility” to reflect on these profound challenges.

This resignation echoes the warnings issued by Anthropic CEO Dario Amodei, who recently cautioned about the “almost unimaginable power” of imminent AI systems. Amodei’s extensive essay highlighted the “autonomy risks” associated with AI, including the potential for AI to “go rogue” and the possibility of a technologically enabled “global totalitarian dictatorship.” These concerns are not limited to Anthropic; they reflect a growing consensus among leading AI researchers about the existential risks posed by unchecked AI development.

The interconnected crises Sharma references extend beyond AI, encompassing climate change, geopolitical instability, and the potential for pandemics. His departure serves as a potent reminder that technological progress must be guided by ethical considerations and a deep understanding of its potential consequences. What responsibility do tech companies have to anticipate and mitigate the broader societal impacts of their innovations? And how can we ensure that the pursuit of progress doesn’t come at the cost of our collective future?

The Growing Concerns Surrounding AI Safety

The debate surrounding AI safety has intensified in recent years, fueled by rapid advancements in machine learning and the increasing capabilities of AI systems. Experts warn that without careful planning and robust safeguards, AI could pose significant risks to humanity. These risks range from job displacement and algorithmic bias to the potential for autonomous weapons systems and the erosion of privacy.

Several organizations are dedicated to researching and mitigating these risks, including the Future of Life Institute and the Center for AI Safety. These groups advocate for responsible AI development, emphasizing the importance of transparency, accountability, and ethical considerations.

Frequently Asked Questions About AI Safety

- What is AI safety and why is it important?

AI safety refers to the research and practices aimed at ensuring that artificial intelligence systems are aligned with human values and do not pose unintended risks to society. It’s important because increasingly powerful AI systems could have far-reaching consequences.

- What are the biggest threats associated with advanced AI?

Some of the biggest threats include the development of autonomous weapons, the potential for algorithmic bias and discrimination, job displacement due to automation, and the risk of AI systems becoming uncontrollable or misaligned with human goals.

- How is Anthropic addressing AI safety concerns?

Anthropic has established a Safeguards Research Team dedicated to tackling AI security threats, including model misuse, bioterrorism prevention, and catastrophe prevention. However, recent events suggest internal tensions regarding the prioritization of safety measures.

- What role does the Pentagon play in the AI safety debate?

The Pentagon’s desire to deploy AI for autonomous weapons targeting without adequate safeguards has raised concerns among AI safety researchers, highlighting a conflict between military applications and responsible AI development.

- What can be done to promote responsible AI development?

Promoting responsible AI development requires collaboration between researchers, policymakers, and industry leaders. Key steps include investing in AI safety research, establishing ethical guidelines, and fostering transparency and accountability in AI systems.
