Nearly 70% of autistic adults report feeling misunderstood, a statistic often linked to societal expectations surrounding emotional expression. But what if the problem isn’t a lack of emotion, but a difference in how emotion is displayed? New research suggests that autistic individuals express emotions through facial movements in distinct ways, challenging long-held assumptions and opening the door to a more nuanced understanding of neurodiversity. This is more than academic curiosity: it could reshape fields from artificial intelligence to mental healthcare.

The Subtle Language of Autistic Facial Expression

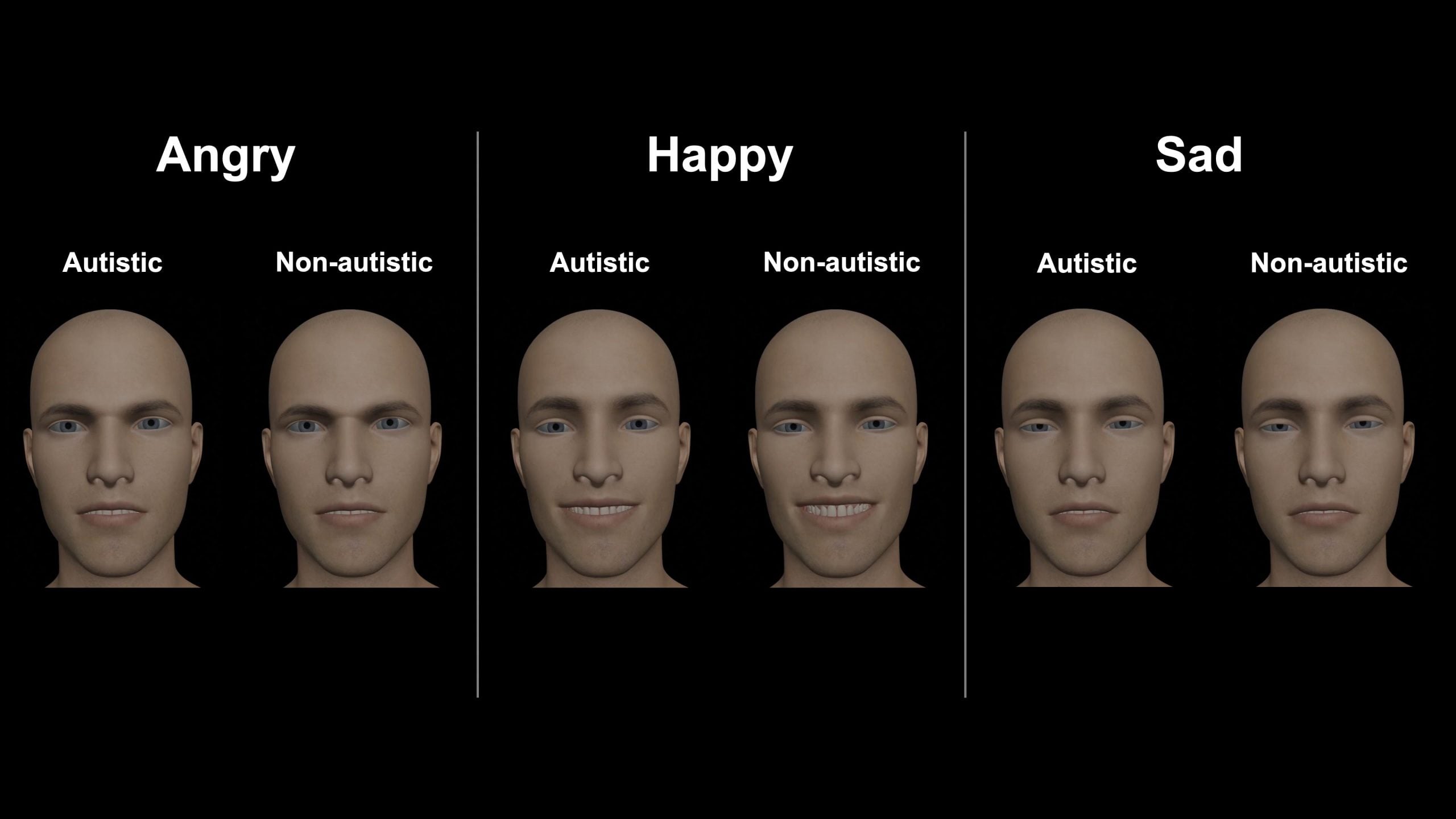

For decades, the prevailing view held that autistic individuals show reduced emotional expression, a “flat affect.” However, recent studies, covered by outlets including SciTechDaily, News-Medical, the Daily Mail, and ScienceDaily, paint a far more complex picture. Researchers are finding that while autistic individuals may not use the same intensity or pattern of facial muscle movements as neurotypical individuals, they do experience and express emotions. The difference lies in the subtle choreography of facial muscles: the micro-expressions and nuanced shifts that casual observers often miss.

This isn’t about masking or suppressing feelings. Instead, the research suggests that the neural pathways governing facial expression may be wired differently in autistic brains. The amygdala, often associated with emotional processing, and the facial motor cortex, which controls the facial muscles, may operate with a different degree of synchronization. The result is a more individualized and less conventionally “readable” emotional display.

Decoding the Differences: What the Research Shows

Scientists are using advanced facial coding systems and machine learning algorithms to analyze subtle differences in facial expression between autistic and neurotypical individuals. These systems can detect minute muscle movements that are easy to miss with the naked eye. Initial findings indicate that autistic individuals may exhibit:

- Reduced intensity in certain facial muscle movements.

- Different timing and sequencing of muscle activations.

- A greater reliance on other forms of emotional communication, such as body language and vocal tone.

It’s crucial to emphasize that these differences are not deficits. They represent a different way of communicating emotion, one that deserves recognition and respect.
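To make “intensity” and “timing” concrete, here is a minimal Python sketch of how they might be quantified once a facial coding tool has produced per-frame intensities for each facial action unit (AU). The input format, the 0.5 activation threshold, and the names `summarize_au` and `activation_order` are hypothetical illustrations, not the method used in the studies mentioned above, and the sample values are invented.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical input format: per-frame intensity for each facial action unit (AU),
# sampled at a fixed frame rate. The 0.5 activation threshold is an illustrative
# assumption, not a value taken from the studies discussed here.

@dataclass
class AUSummary:
    peak: float               # highest intensity reached in the clip
    onset_s: Optional[float]  # seconds until the AU first reaches the threshold, or None

def summarize_au(series: list[float], fps: float, threshold: float = 0.5) -> AUSummary:
    """Summarize one AU's intensity over time: how strong, and how soon."""
    peak = max(series, default=0.0)
    onset = next((i / fps for i, v in enumerate(series) if v >= threshold), None)
    return AUSummary(peak=peak, onset_s=onset)

def activation_order(aus: dict[str, list[float]], fps: float, threshold: float = 0.5) -> list[str]:
    """Return AU names ordered by onset time; AUs that never reach the threshold are left out."""
    onsets = [(summarize_au(s, fps, threshold).onset_s, name) for name, s in aus.items()]
    return [name for onset, name in sorted(o for o in onsets if o[0] is not None)]

# Toy clip at 10 frames per second (invented values, not real data).
smile = {
    "AU06": [0.0, 0.1, 0.3, 0.6, 0.9, 1.0],  # cheek raiser
    "AU12": [0.0, 0.6, 1.2, 1.8, 2.0, 1.9],  # lip corner puller
}
print(summarize_au(smile["AU12"], fps=10))  # AUSummary(peak=2.0, onset_s=0.1)
print(activation_order(smile, fps=10))      # ['AU12', 'AU06']
```

Real facial coding pipelines produce far richer output; the point is only that intensity and sequencing become measurable quantities once expressions are coded frame by frame, which is what allows group-level comparisons.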

The Future of AI: Building Emotionally Intelligent Systems

The implications of this research extend far beyond the realm of autism understanding. The current generation of artificial intelligence, particularly in the field of affective computing (AI that recognizes and responds to human emotions), is largely trained on datasets composed primarily of neurotypical facial expressions. This creates a significant bias, rendering these systems less accurate and potentially discriminatory when interacting with autistic individuals.
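One simple way to surface this kind of bias is to report a model’s accuracy separately for each group rather than as a single overall number. A minimal Python sketch, assuming evaluation records of the form (group, true label, predicted label); the function name `accuracy_by_group` is hypothetical and the records are invented for illustration, not drawn from any real system.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, true_label, predicted_label) tuples.
    Returns {group: share of that group's records the model labeled correctly}."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, true_label, predicted_label in records:
        totals[group] += 1
        correct[group] += predicted_label == true_label  # bool counts as 0 or 1
    return {group: correct[group] / totals[group] for group in totals}

# Invented evaluation records, for illustration only.
records = [
    ("autistic", "happy", "neutral"),
    ("autistic", "happy", "happy"),
    ("autistic", "sad", "neutral"),
    ("autistic", "neutral", "neutral"),
    ("non-autistic", "happy", "happy"),
    ("non-autistic", "sad", "sad"),
    ("non-autistic", "neutral", "neutral"),
    ("non-autistic", "sad", "neutral"),
]
per_group = accuracy_by_group(records)
print(per_group)  # {'autistic': 0.5, 'non-autistic': 0.75}
overall = sum(t == p for _, t, p in records) / len(records)
print(overall)    # 0.625
```

Pooled together, these predictions score 62.5%, a figure that hides the fact that the model is right only half the time for the autistic group. Breaking results out per group is a cheap first check before any effort to retrain on more diverse data.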

Imagine a future where AI-powered mental health chatbots can accurately interpret the emotional state of all users, regardless of neurotype. Or a virtual assistant that doesn’t misinterpret a neutral facial expression as a lack of interest. To achieve this, we need to diversify the datasets used to train AI, incorporating a wider range of emotional expressions, including those commonly observed in autistic individuals. This requires a concerted effort to collect and annotate data from diverse populations, ensuring that AI systems are truly inclusive and equitable.

Transforming Mental Healthcare: Beyond the “Reading Faces” Paradigm

Mental health assessment has long relied, in part, on the clinician’s ability to “read” a patient’s facial expressions. If autistic individuals express emotions differently, an assessment that leans on this skill becomes unreliable for them. Misinterpretations can lead to misdiagnosis, inappropriate treatment, and the perpetuation of harmful stereotypes.

The future of mental healthcare lies in adopting a more holistic and individualized approach. This includes:

- Utilizing a wider range of assessment tools, beyond facial expression analysis.

- Prioritizing self-reporting and subjective experiences.

- Providing training for clinicians on neurodiversity and the nuances of autistic communication.

Furthermore, the research into autistic facial expressions could lead to the development of new diagnostic tools that are specifically designed to identify emotional states in neurodivergent individuals.

| Area | Current State | Projected Future (2030) |
|---|---|---|
| AI Emotional Recognition Accuracy (Autistic Individuals) | 65% | 85% |
| Clinician Training on Neurodiversity | 20% of Professionals Trained | 75% of Professionals Trained |
| Diagnostic Misinterpretation Rate (Autistic Individuals) | 30% | 10% |

Embracing Neurodiversity: A More Empathetic Future

Ultimately, this research is a powerful reminder that neurodiversity is not a pathology to be cured, but a natural variation in human experience. By recognizing and respecting the different ways in which autistic individuals express emotions, we can create a more inclusive and empathetic society. **Understanding autistic facial expressions** is not just about improving AI or refining diagnostic practices; it’s about fostering a world where everyone feels seen, heard, and understood.

Frequently Asked Questions About Autistic Emotional Expression

What does this research mean for autistic individuals who feel they are often misunderstood?

This research validates the experiences of many autistic individuals who feel their emotions are often misinterpreted. It highlights that differences in emotional expression are not a sign of lacking emotion, but rather a different way of processing and displaying it.

How can I become more aware of the nuances of autistic emotional expression?

Educate yourself! Read articles and books written by autistic individuals, listen to their perspectives, and challenge your own assumptions about emotional expression. Focus on listening to what people say, rather than solely relying on facial cues.

Will this research lead to a change in the diagnostic criteria for autism?

It’s possible. The research is prompting a re-evaluation of current diagnostic practices and a move towards more holistic and individualized assessments. A greater emphasis on self-reporting and a reduced reliance on “reading faces” are likely outcomes.

What role can technology play in bridging the communication gap between autistic and neurotypical individuals?

Technology can play a significant role by developing tools that translate emotional cues across different neurotypes. This could include AI-powered communication aids or virtual reality simulations that help neurotypical individuals experience the world from an autistic perspective.

What are your predictions for the impact of this research on societal perceptions of autism? Share your insights in the comments below!

Discover more from Archyworldys
