AWS Elemental Inference: AI-Powered Video Transformation for the Mobile Era

Amazon Web Services (AWS) today announced AWS Elemental Inference, a fully managed artificial intelligence (AI) service designed to extend the reach and engagement of live and on-demand video broadcasts. The service automatically transforms video content for the way audiences now watch, particularly on mobile and social platforms.

For broadcasters and streamers, reaching viewers on platforms like TikTok, Instagram Reels, and YouTube Shorts often requires significant manual effort. AWS Elemental Inference eliminates this bottleneck, enabling content creators to deliver optimized video experiences without needing specialized AI expertise or extensive post-production workflows.

How people consume video has shifted. Traditional broadcasts remain largely in landscape format, while viewers, especially younger demographics, increasingly watch on mobile devices in vertical orientation. Adapting broadcasts for these platforms by hand is time-consuming, so broadcasters often miss the chance to capitalize on viral moments and lose audience to mobile-first competitors.

Unlocking Seamless Video Adaptation with AWS Elemental Inference

AWS Elemental Inference can be used in two ways, so it fits into existing video workflows: through a dedicated standalone console, or directly within AWS Elemental MediaLive channels. Broadcasters can choose whichever option best suits their current infrastructure and operational needs.

Getting Started: The Standalone Console

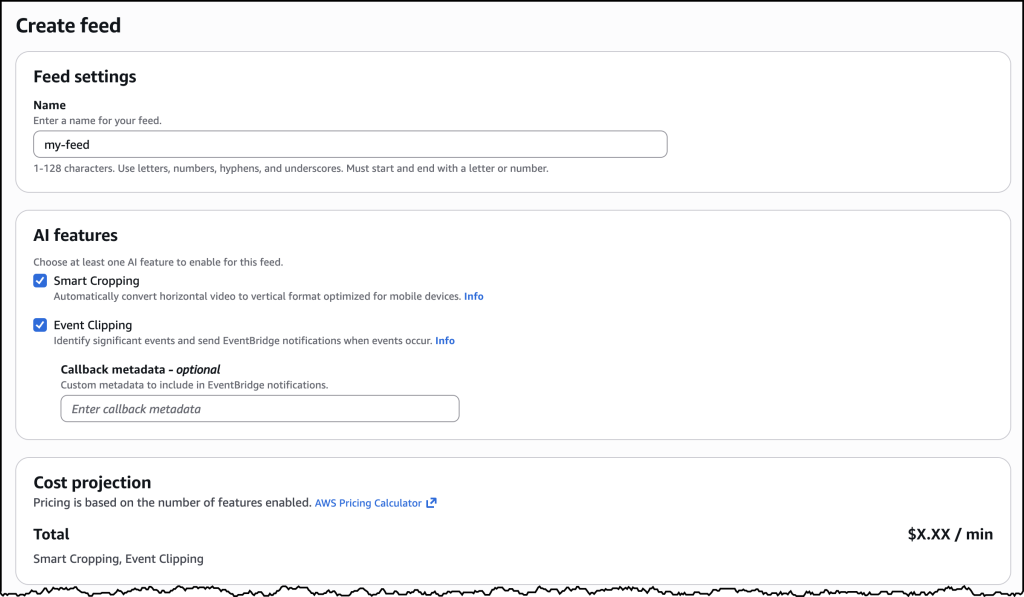

To begin using AWS Elemental Inference, open the AWS Management Console and select AWS Elemental Inference. From the dashboard, choose Create feed. The feed is the core resource for your AI-powered video processing; its status moves from ‘CREATING’ to ‘AVAILABLE’ once configured.
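Automation that creates a feed usually has to wait for that status change before adding outputs. The sketch below shows one way to poll for it. It is a minimal illustration, not the service's API: `get_state` is a hypothetical callable that you would supply to return the feed's current status string, and the timeout and polling interval are arbitrary defaults. Only the two status values named above are assumed to occur during creation.

```python
import time
from typing import Callable


def wait_until_available(
    get_state: Callable[[], str],
    timeout_s: float = 300.0,
    poll_interval_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Block until get_state() returns "AVAILABLE" or the timeout expires.

    get_state is a hypothetical hook: wrap whatever call returns the
    feed status in your environment.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        state = get_state()
        if state == "AVAILABLE":
            return
        # Assumption: CREATING is the only other state seen while the
        # feed is being set up; anything else is treated as an error.
        if state != "CREATING":
            raise RuntimeError(f"unexpected feed state: {state}")
        if time.monotonic() >= deadline:
            raise TimeoutError("feed did not become AVAILABLE in time")
        sleep(poll_interval_s)
```

Passing `sleep` as a parameter keeps the function easy to exercise in tests without real delays.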

Within the console, you can configure outputs for either vertical video cropping or automated clip generation. For vertical cropping, simply start with an empty feed, and the service intelligently manages cropping parameters based on your video specifications. To enable clip generation, add an output, name it (e.g., “highlight-clips”), select “Clipping” as the output type, and set the status to “ENABLED”.

Integrating with AWS Elemental MediaLive

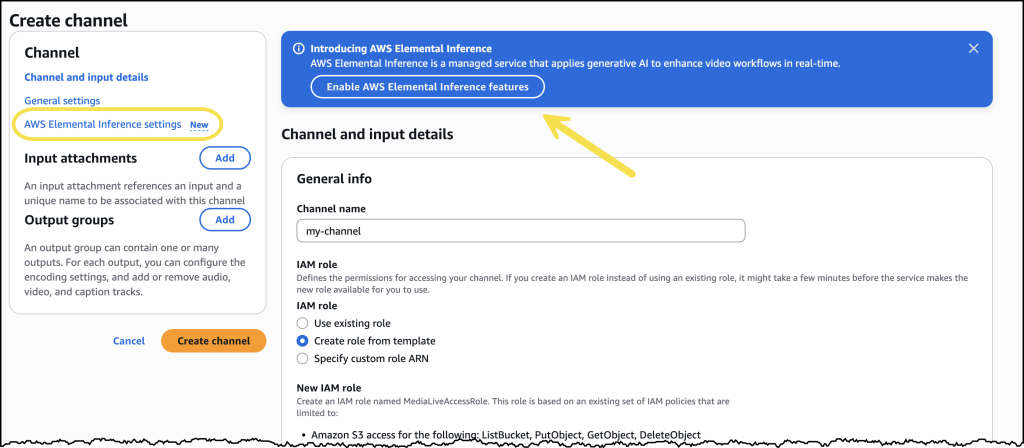

Alternatively, AWS Elemental Inference can be integrated directly into existing AWS Elemental MediaLive channel configurations. This approach lets broadcasters add AI capabilities to their live video workflows without architectural changes. Once the desired features are enabled in the channel setup, AWS Elemental Inference runs in parallel with video encoding.

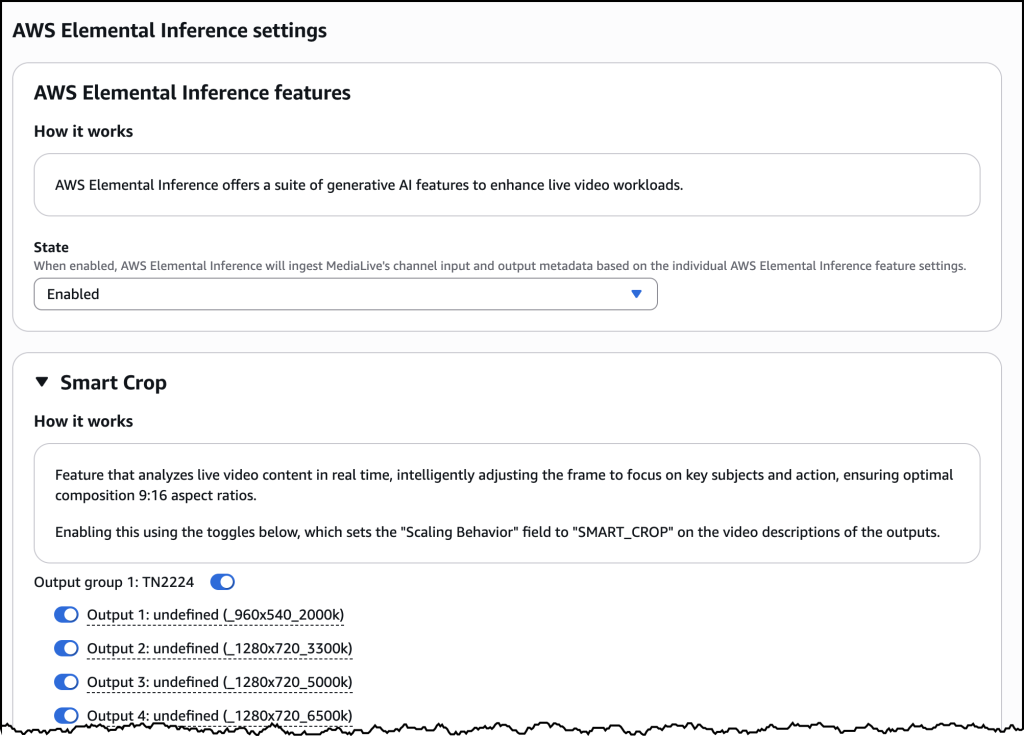

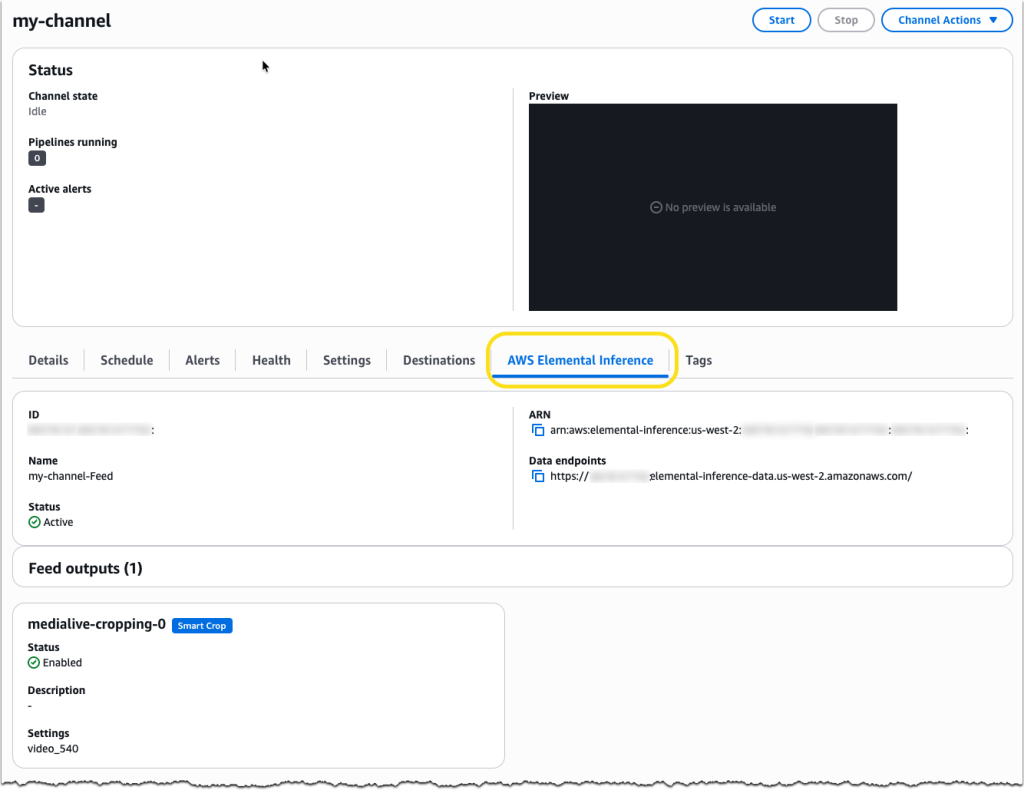

Once enabled, Smart Crop can be configured with outputs for various resolution specifications within an Output group. AWS Elemental MediaLive now features a dedicated AWS Elemental Inference tab on the channel details page, providing a centralized view of your AI-powered video transformation configuration, including the service’s Amazon Resource Name (ARN), data endpoints, and feed output details.

How Does AWS Elemental Inference Work?

At its core, AWS Elemental Inference uses an agentic AI application that analyzes video in real time and automatically applies the appropriate optimizations at the right moments. Vertical video cropping and clip generation run independently of each other, each executing multi-step transformations without human intervention. This lets broadcasters get more value from both live and on-demand content.

The service analyzes video and applies AI capabilities autonomously, freeing production teams to focus on content quality. AWS Elemental Inference achieves a latency of 6 to 10 seconds, compared with the minutes required by traditional post-processing methods. Its “process once, optimize everywhere” approach lets multiple AI features run simultaneously on a single video stream, so the same content does not need to be reprocessed for each feature.

Seamless integration with AWS Elemental MediaLive ensures that AI features can be enabled without disrupting existing video architectures. Furthermore, AWS Elemental Inference leverages fully managed foundation models (FMs) that are automatically updated and optimized, removing the need for dedicated AI teams or specialized expertise.

Key Features at Launch

- Vertical Video Creation: AI-powered cropping intelligently transforms landscape broadcasts into vertical formats (9:16 aspect ratio) optimized for social and mobile platforms. The service tracks subjects and maintains broadcast quality while automatically reformatting content for mobile viewing.

- Clip Generation with Advanced Metadata Analysis: Automatically detects and extracts clips from live content, highlighting key moments for real-time distribution. This significantly reduces manual editing time, particularly for live sports broadcasts.
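To make the 9:16 vertical format concrete, the sketch below computes a full-height crop window inside a landscape frame. It illustrates the geometry only and is not the service's cropping logic, which the announcement does not describe. The `subject_x` parameter (a horizontal position from 0 to 1) is a hypothetical stand-in for whatever subject tracking supplies, and rounding the width down to an even number is an assumption made here because many encoders expect even dimensions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CropWindow:
    x: int       # left edge in pixels
    y: int       # top edge in pixels
    width: int
    height: int


def vertical_crop(src_w: int, src_h: int, subject_x: float = 0.5) -> CropWindow:
    """Return a full-height 9:16 window centred on subject_x, clamped to the frame."""
    if src_w <= 0 or src_h <= 0:
        raise ValueError("source dimensions must be positive")
    if not 0.0 <= subject_x <= 1.0:
        raise ValueError("subject_x must be between 0 and 1")

    # Keep the full height; width = height * 9 / 16, rounded down to an even value.
    width = (src_h * 9 // 16) // 2 * 2
    if width > src_w:
        raise ValueError("source is narrower than 9:16")

    # Centre the window on the subject, then clamp so it stays inside the frame.
    ideal_left = round(subject_x * src_w - width / 2)
    left = max(0, min(ideal_left, src_w - width))
    return CropWindow(x=left, y=0, width=width, height=src_h)


if __name__ == "__main__":
    # A 1920x1080 frame with the subject in the middle.
    print(vertical_crop(1920, 1080))
    # A subject near the right edge: the window is clamped to the frame.
    print(vertical_crop(1920, 1080, subject_x=0.95))
```

For a 1920x1080 source the window is 606 pixels wide, so roughly two thirds of each frame is discarded. That is why where the window sits matters so much for keeping the action visible.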

AWS plans to introduce additional features and capabilities throughout the year, including tighter integration with core AWS Elemental services and features designed to help customers monetize their video content.

Availability and Pricing

AWS Elemental Inference is available now in four AWS Regions: US East (N. Virginia), US West (Oregon), Europe (Ireland), and Asia Pacific (Mumbai). It can be enabled through the AWS Elemental MediaLive console or integrated into workflows using the AWS Elemental MediaLive APIs.
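Deployment scripts sometimes check a target Region before enabling a feature. The sketch below maps the four launch Regions listed above to their standard AWS Region codes. The helper name `is_launch_region` is illustrative, and the list reflects only the Regions named in this announcement, so it would need updating as availability changes.

```python
# Launch Regions named in the announcement, keyed by standard AWS Region code.
LAUNCH_REGIONS = {
    "us-east-1": "US East (N. Virginia)",
    "us-west-2": "US West (Oregon)",
    "eu-west-1": "Europe (Ireland)",
    "ap-south-1": "Asia Pacific (Mumbai)",
}


def is_launch_region(region_code: str) -> bool:
    """Return True if region_code is one of the Regions listed at launch."""
    return region_code.strip().lower() in LAUNCH_REGIONS
```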

Pricing is consumption-based, meaning you only pay for the features you use and the video you process, with no upfront costs or long-term commitments. This flexible model allows you to scale resources during peak events and optimize costs during quieter periods.

To learn more about AWS Elemental Inference, visit the AWS Elemental Inference product page. For detailed technical implementation information, refer to the AWS Elemental Inference documentation.

As video consumption continues to evolve, how will broadcasters adapt to meet the demands of a mobile-first audience? And what new creative opportunities will AI-powered video transformation unlock for content creators?

Frequently Asked Questions

What is AWS Elemental Inference?

AWS Elemental Inference is a fully managed AI service that automatically transforms and optimizes live and on-demand video broadcasts for various platforms and devices, particularly mobile.

How does AWS Elemental Inference help with vertical video?

AWS Elemental Inference uses AI-powered cropping to intelligently transform landscape video into vertical formats (9:16), ensuring key action remains visible and maintaining broadcast quality.

Can I use AWS Elemental Inference with my existing video workflow?

Yes, AWS Elemental Inference offers flexible deployment options, including integration with AWS Elemental MediaLive, allowing you to add AI capabilities without modifying your current architecture.

What are the benefits of using AI for video processing?

AI-powered video processing automates tasks like cropping and clip generation, reducing manual effort, accelerating time-to-market, and improving audience engagement.

Is AWS Elemental Inference available in all AWS Regions?

Currently, AWS Elemental Inference is available in US East (N. Virginia), US West (Oregon), Europe (Ireland), and Asia Pacific (Mumbai). AWS is continually expanding regional availability.

Disclaimer: This article provides information for general knowledge and informational purposes only, and does not constitute professional advice.

