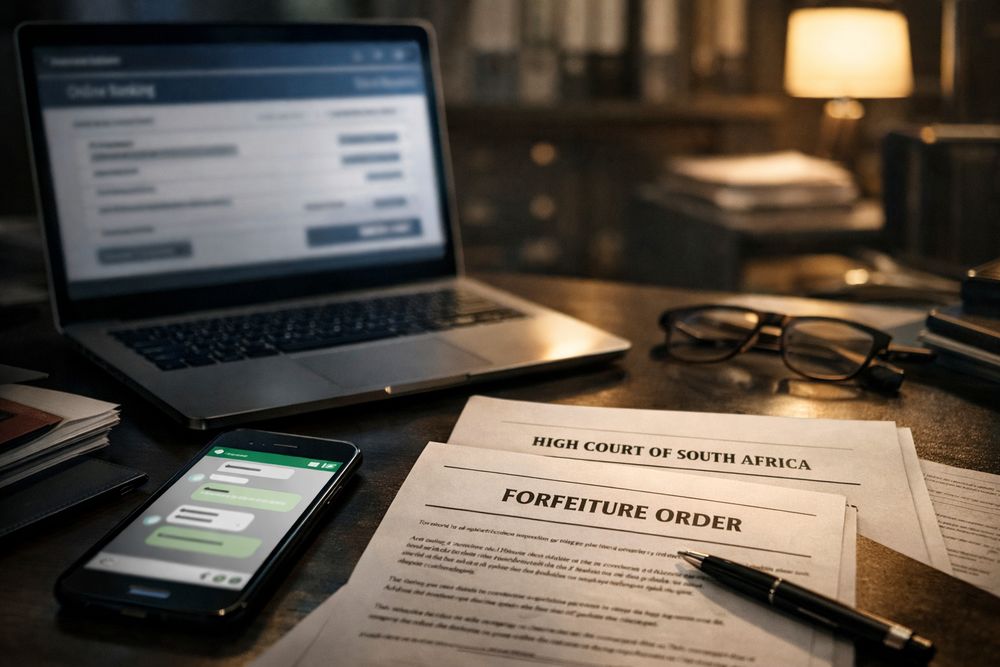

More than R22 million was paid out to fraudsters who targeted a single South African retailer, Foschini, in recent weeks. The Asset Forfeiture Unit (AFU) has recovered R11 million, but the case is more than a win for law enforcement; it is a stark warning. Triggered by a WhatsApp scam impersonating a senior executive, it highlights a rapidly escalating threat: the convergence of social engineering and readily available technology. This is no longer just about phishing emails; it is about social engineering that AI can weaponize, and the future of corporate security depends on understanding this shift.

The Anatomy of a Modern Scam: Beyond the Phishing Email

The Foschini case, as reported by IOL, News24, and Bizcommunity, shows how targeted modern fraud has become. The scammers didn’t rely on mass-mailed emails; they approached a senior accountant directly via WhatsApp, a platform perceived as more trustworthy and personal. By convincingly impersonating a high-ranking executive, they manipulated the employee into authorizing fraudulent transactions totaling over R22 million. The AFU’s swift action is commendable, but the fact that only R11 million of that sum has been recovered underscores the vulnerability of even established organizations.

Why WhatsApp? The Illusion of Authenticity

WhatsApp’s end-to-end encryption and widespread adoption contribute to a false sense of security: encryption protects a message in transit, but it does nothing to prove that the sender is who they claim to be. Employees are more likely to engage with messages that appear to come from colleagues on WhatsApp than with unsolicited emails. Scammers exploit this trust, using readily available profile pictures and contact information to create believable personas. The platform’s informal nature can also lower an employee’s guard, making them less likely to scrutinize a request as carefully as they would in a formal email exchange.

The AI Inflection Point: Scaling Social Engineering

While the Foschini scam relied on human deception, the future of this type of fraud lies in artificial intelligence. AI-powered tools are already capable of:

- Deepfake Voice Cloning: Creating realistic audio impersonations of executives, making fraudulent requests even more convincing.

- Hyper-Personalized Messaging: Analyzing publicly available data (LinkedIn, social media) to craft highly targeted and believable messages.

- Automated Social Engineering Campaigns: Scaling attacks to target hundreds or thousands of employees simultaneously.

- Real-Time Language Translation: Overcoming language barriers and expanding the reach of scams globally.

These technologies dramatically lower the barrier to entry for fraudsters, allowing them to execute more sophisticated and scalable attacks. The Foschini case is likely a precursor to a wave of AI-driven social engineering attacks targeting businesses of all sizes.

Building a Human Firewall: Proactive Defense Strategies

Traditional security measures such as firewalls and antivirus software are insufficient to combat AI-powered social engineering, because the attack targets a person’s judgment rather than a system. The focus must shift to building a “human firewall” through comprehensive employee training and robust internal controls.

Key Strategies for Mitigation:

- Multi-Factor Authentication (MFA): Implementing MFA for all critical systems adds an extra layer of security, even if an employee’s credentials are compromised.

- Verification Protocols: Establishing clear protocols for verifying requests, especially those involving financial transactions. This includes requiring out-of-band verification (e.g., a phone call to confirm a request).

- Regular Security Awareness Training: Educating employees about the latest social engineering tactics, including those leveraging AI. Simulated phishing exercises can help identify vulnerabilities and reinforce best practices.

- Zero Trust Architecture: Adopting a zero-trust security model, which assumes that no user or device is inherently trustworthy, regardless of location or network access.
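To make the verification-protocol idea concrete, here is a minimal sketch of how a finance team might encode an out-of-band check as a rule rather than leaving it to individual judgment. Everything in it is an illustrative assumption, not a description of Foschini’s controls: the `PaymentRequest` fields, the `TRUSTED_REQUEST_CHANNELS` set, the R50,000 callback threshold, and the two-approver rule are all hypothetical values that an organisation would set for itself.

```python
from dataclasses import dataclass, field

# Hypothetical policy values; each organisation would choose its own.
TRUSTED_REQUEST_CHANNELS = {"erp_workflow"}
CALLBACK_THRESHOLD_ZAR = 50_000


@dataclass
class PaymentRequest:
    requester: str                     # who the message claims to come from
    amount_zar: float
    channel: str                       # e.g. "whatsapp", "email", "erp_workflow"
    callback_confirmed: bool = False   # confirmed by phoning a number from the internal directory
    approvers: set[str] = field(default_factory=set)


def release_blockers(req: PaymentRequest) -> list[str]:
    """Return the reasons a payment must not be released yet (empty list means OK)."""
    blockers = []
    if req.channel not in TRUSTED_REQUEST_CHANNELS and not req.callback_confirmed:
        blockers.append("request arrived on an unverified channel; call back on a known number")
    if req.amount_zar >= CALLBACK_THRESHOLD_ZAR and not req.callback_confirmed:
        blockers.append("amount is above the callback threshold; out-of-band confirmation required")
    # Dual control: count only approvers who are not the requester.
    independent_approvers = req.approvers - {req.requester}
    if len(independent_approvers) < 2:
        blockers.append("two approvers other than the requester are required")
    return blockers


if __name__ == "__main__":
    request = PaymentRequest(requester="cfo", amount_zar=2_000_000, channel="whatsapp")
    for reason in release_blockers(request):
        print("BLOCKED:", reason)
```

The point of the design is that a convincing message alone can never satisfy the rule: the callback must use a number the company already holds, not one supplied in the chat, and no single employee can release the funds.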

| Security Measure | Estimated Reduction in Fraud Losses |
|---|---|
| Multi-Factor Authentication | Up to 99.9% |
| Regular Security Awareness Training | 20-40% |
| Zero Trust Architecture | 15-30% |

The Future Landscape: Constant Vigilance and Adaptive Security

The threat landscape is constantly evolving, and businesses must adopt a proactive and adaptive security posture. This means continuously monitoring for new threats, updating security protocols, and investing in employee training. The Foschini incident serves as a critical reminder that even seemingly secure organizations are vulnerable to sophisticated attacks. The age of relying solely on technological defenses is over; the future of security lies in empowering employees to become the first line of defense against increasingly intelligent adversaries.

Frequently Asked Questions About AI-Powered Social Engineering

Q: How can I tell if a WhatsApp message is from a scammer?

A: Be wary of urgent requests, especially those involving financial transactions. Verify the sender’s identity through a separate channel (e.g., a phone call to a number you already have) and scrutinize the message for grammatical errors or inconsistencies, bearing in mind that a well-written message is not proof of legitimacy.
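As a rough illustration of the warning signs in that answer, the sketch below tags a message with the red flags it contains. The pattern lists and the `red_flags` helper are hypothetical and deliberately simple; a carefully worded scam can pass through without tripping any of them, so a check like this could at most prompt an employee to verify, never replace verification.

```python
import re

# Illustrative patterns only; not an exhaustive or validated rule set.
RED_FLAGS = {
    "urgency": re.compile(r"\b(urgent(ly)?|immediately|right now|asap|today)\b", re.I),
    "payment": re.compile(r"\b(payment|transfer|invoice|eft|bank details)\b", re.I),
    "secrecy": re.compile(r"\b(confidential|keep this between us|do not tell|don'?t tell)\b", re.I),
    "channel_switch": re.compile(r"\b(new number|cannot talk|can'?t talk|in a meeting)\b", re.I),
}


def red_flags(message: str) -> list[str]:
    """Return the names of the red-flag categories found in the message."""
    return [name for name, pattern in RED_FLAGS.items() if pattern.search(message)]


if __name__ == "__main__":
    sample = ("Hi, this is my new number. I'm in a meeting, please make an "
              "urgent transfer today and keep this between us.")
    print(red_flags(sample))
```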

Q: What role does AI play in making these scams more effective?

A: AI enables scammers to personalize attacks, automate campaigns, and create convincing deepfakes, making it harder to detect fraudulent activity.

Q: Is my business at risk, even if it’s small?

A: Absolutely. Small businesses are often targeted because they have fewer security resources. Implementing basic security measures, such as MFA and employee training, can significantly reduce your risk.

