The Ghost in the Machine: AI, Intellectual Property, and the Looming Crisis of Stolen Voice

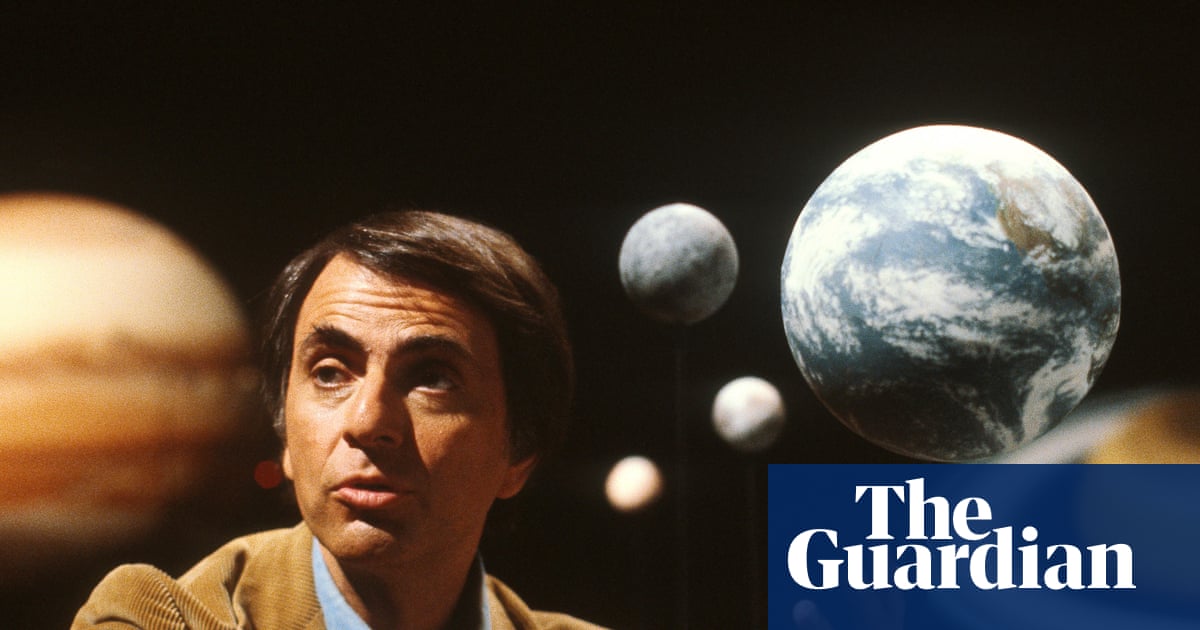

The rise of generative AI promised to democratize creativity, but a recent lawsuit against Grammarly’s parent company, Superhuman, reveals a darker side: the potential for AI to not just mimic, but monetize the intellectual property of individuals without their consent. The case, sparked by Grammarly’s now-disabled “Expert Review” feature – which offered writing feedback in the style of figures like Stephen King, Neil deGrasse Tyson, and even the late Carl Sagan – isn’t just about legal precedent; it’s a harbinger of a fundamental shift in how we understand authorship, identity, and the very value of a unique voice in the digital age. The potential damages, exceeding $5 million, underscore the gravity of the situation.

The Erosion of Authorship in the Age of AI

For decades, concerns about AI have centered on job displacement. The Grammarly lawsuit highlights a more insidious threat: the devaluing of individual skill and expertise. As investigative journalist Julia Angwin, the lead plaintiff, put it, “Editing is a skill… it’s my livelihood, but it’s not something I’ve ever thought about anyone trying to steal from me before. I didn’t even think it was steal-able.” The ability to articulate thought, to craft compelling narratives, to offer insightful analysis: these are not merely technical skills but extensions of personal identity. When AI appropriates these qualities, even in simulated form, it challenges the very notion of authorship.

The core issue isn’t simply that Grammarly’s AI attempted to emulate these figures. It’s that it did so for profit, leveraging their established reputations to enhance a subscription service. Tech journalist Casey Newton succinctly captured the problem: “[Grammarly] curated a list of real people, gave its models free rein to hallucinate plausible-sounding advice on their behalf, and put it all behind a subscription. That’s a deliberate choice to monetise the identities of real people without involving them, and it sucks.”

Beyond Grammarly: The Expanding Landscape of AI Persona Theft

The Grammarly case is likely just the tip of the iceberg. As AI models become increasingly sophisticated, the temptation to leverage recognizable “voices” will only grow. Imagine AI-powered customer service agents trained to mimic the empathy of beloved celebrities, or marketing campaigns that deploy AI-generated endorsements from deceased historical figures. The possibilities – and the ethical quagmires – are endless.

This isn’t limited to prominent individuals. The inclusion of the late academic David Abulafia in Grammarly’s feature, as highlighted by Vanessa Heggie, demonstrates that even those with a more niche reputation are vulnerable. The ease with which AI can scrape and synthesize data from online sources means that anyone with a publicly available writing style could potentially be replicated and exploited.

The Legal and Technological Challenges Ahead

The legal battle facing Superhuman will be pivotal. The lawsuit hinges on the argument that using a person’s name for commercial gain without permission is unlawful. However, existing intellectual property laws were not designed to address the unique challenges posed by generative AI. Establishing clear legal boundaries will require a nuanced understanding of what constitutes “authorship” in the age of AI, and how to protect individuals from the unauthorized appropriation of their intellectual identity.

The technological solutions are equally complex. Watermarking AI-generated content is one potential approach, but watermarks can often be removed or evaded, and a watermark only marks output as machine-made; it says nothing about whether the person being imitated agreed to it. Developing AI models that are explicitly trained to respect intellectual property boundaries is another, but it requires a fundamental shift in how these models are designed and deployed. Perhaps the most promising avenue lies in the development of “digital identity” frameworks that allow individuals to control how their data is used by AI systems.

The Rise of “Synthetic Consent” and the Need for Regulation

We may soon see the emergence of “synthetic consent” – AI systems attempting to negotiate usage rights with digital representations of individuals. While seemingly futuristic, this highlights the urgent need for regulatory frameworks that address AI’s impact on personal identity. Without clear guidelines, we risk a future where our voices, our styles, and our very personas are freely available for commercial exploitation.

Preparing for a Future of Authenticity Verification

The Grammarly lawsuit is a wake-up call. It signals a growing awareness of the ethical and legal implications of generative AI, and a demand for greater accountability. In the coming years, we can expect increased scrutiny of AI-powered tools and a growing emphasis on authenticity verification. Consumers are likely to ask whether the content they are consuming was created by a human or an AI, and whether the individuals whose voices are being emulated have given their consent.

For writers, academics, and anyone who relies on a unique voice for their livelihood, the time to protect that intellectual property is now. This means actively monitoring online content for unauthorized use of their name and work, and advocating for stronger legal protections. The future of authorship depends on it.

