The Royal Equation: How King Charles’ AI Warnings Signal a New Era of Tech Accountability

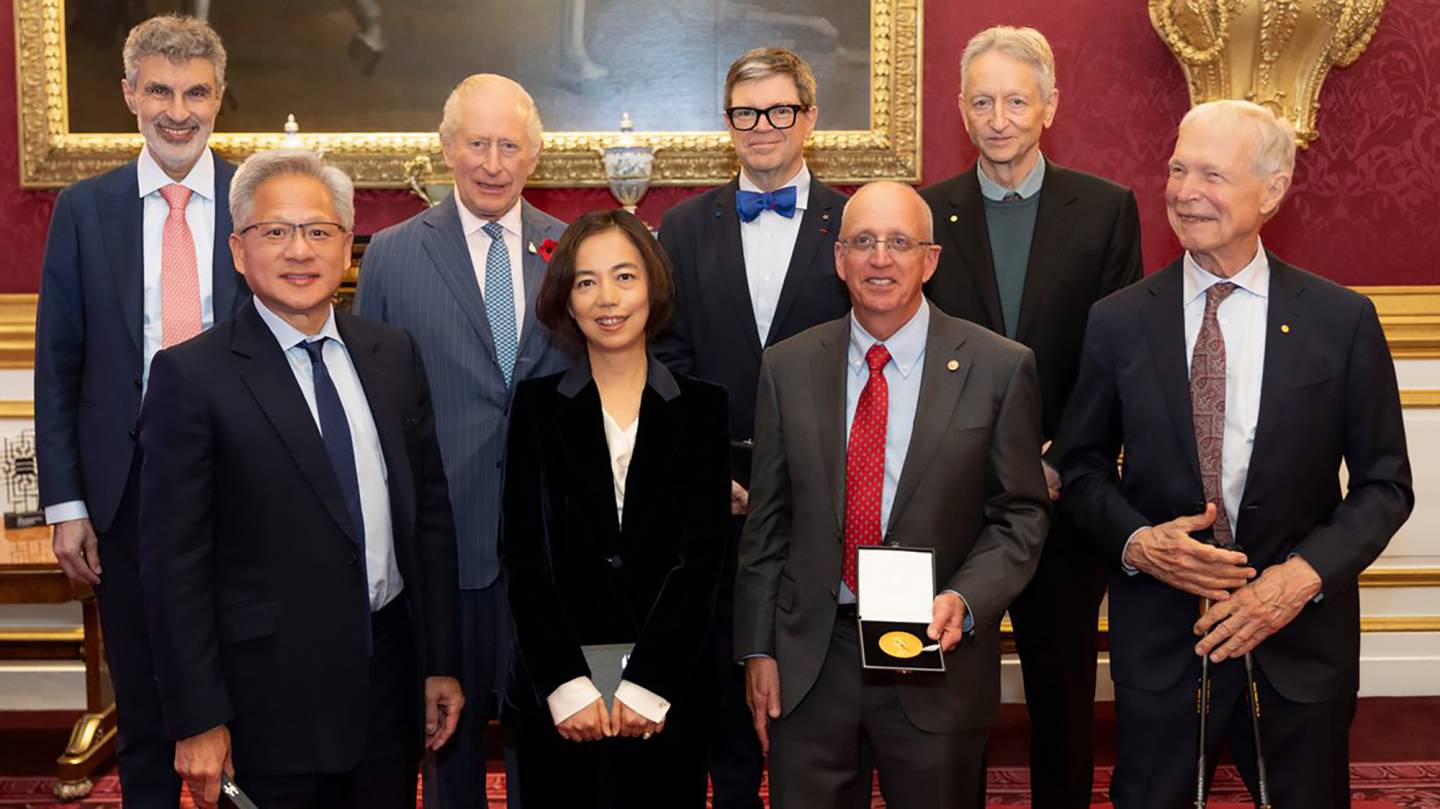

Nearly 60% of global CEOs now cite responsible AI implementation as a top strategic priority, up from barely 20% just two years ago. This seismic shift in corporate consciousness isn’t happening in a vacuum. It’s being actively shaped by a growing chorus of voices – from Nobel laureates to, surprisingly, royalty – demanding a more cautious and ethical approach to artificial intelligence. Recent events, including honors bestowed by King Charles III upon AI pioneers John Hopfield and Fei-Fei Li, coupled with direct conversations between the monarch and tech titans like Nvidia’s Jensen Huang, reveal a pivotal moment: the convergence of power, prestige, and profound concern over the future of AI.

A King’s Cautionary Tale: Beyond the Hype

The reports of King Charles presenting Nvidia CEO Jensen Huang with a copy of his speech warning of the dangers of AI are particularly striking. By these accounts, this wasn’t a casual exchange; it was a deliberate act, signaling the monarch’s active engagement in the global AI debate. The King’s concerns, echoing those of leading AI researchers, center on the potential for misuse, the exacerbation of societal inequalities, and the erosion of human agency. This isn’t simply about fearing a dystopian future; it’s about proactively shaping a future where AI serves humanity, not the other way around. The meeting, coming shortly after Prince Harry and Meghan Markle’s own public statements, suggests that concern about responsible technological development is shared across the Royal Family.

Fei-Fei Li: The ‘Godmother’ Championing Human-Centered AI

The recognition of Fei-Fei Li, often dubbed the “godmother of AI,” alongside John Hopfield, is equally significant. Li’s work has consistently emphasized the importance of building AI systems that are aligned with human values and prioritize fairness, transparency, and accountability. Her assertion that she is “proud to be different” speaks to a broader movement within the AI community – a rejection of the purely technical, profit-driven approach in favor of a more holistic, human-centered one. This is a critical divergence. For too long, AI development has been dominated by a narrow set of perspectives, often lacking diversity and ethical considerations.

The Rise of AI Ethics as a Competitive Advantage

What’s often overlooked is that ethical AI isn’t just a moral imperative; it’s becoming a key competitive advantage. Consumers are increasingly aware of the potential biases and harms associated with AI, and they are actively seeking out companies that prioritize responsible development. Businesses that fail to address these concerns risk reputational damage, regulatory scrutiny, and ultimately, loss of market share. The European Union’s AI Act, for example, is setting a new global standard for AI regulation, and companies operating in Europe will be forced to comply with stringent requirements regarding transparency, accountability, and risk management.

The Future of AI Governance: A Multi-Stakeholder Approach

The involvement of figures like King Charles III highlights the need for a multi-stakeholder approach to AI governance. This isn’t a problem that can be solved by technologists alone. It requires the input of policymakers, ethicists, social scientists, and the public. We’re likely to see a growing role for non-governmental organizations (NGOs) and civil society groups in shaping the AI landscape. Furthermore, the increasing focus on AI safety research – exploring ways to prevent unintended consequences and ensure that AI systems remain under human control – will be crucial. Expect to see increased funding and collaboration in this area, driven by both public and private sector initiatives.

The convergence of these events – royal endorsements, direct dialogues with tech leaders, and a growing public awareness of AI’s potential risks – signals a turning point. The era of unchecked AI development is coming to an end. The future belongs to those who can harness the power of AI responsibly, ethically, and with a clear understanding of its potential impact on society.

Frequently Asked Questions About the Future of AI Governance

What role will governments play in regulating AI?

Governments worldwide are actively developing AI regulations, with the EU leading the way with its AI Act. Expect increased scrutiny, stricter compliance requirements, and a focus on protecting citizens from potential harms.

How can businesses ensure they are developing AI ethically?

Businesses should prioritize transparency, fairness, and accountability in their AI systems. This includes conducting thorough risk assessments, implementing robust data governance practices, and fostering a culture of ethical AI development.

Will AI continue to advance rapidly despite these concerns?

Yes, AI development will likely continue at a rapid pace, but with a greater emphasis on safety and ethical considerations. The focus will shift from simply building more powerful AI to building AI that is aligned with human values and benefits society.

What is the biggest risk associated with unchecked AI development?

The biggest risk is the potential for AI to exacerbate existing societal inequalities, erode human agency, and be used for malicious purposes. Proactive governance and ethical development are crucial to mitigating these risks.

