
<p>The AI landscape is undergoing a quiet revolution, one not measured in algorithmic breakthroughs but in silicon. While Nvidia dominates the AI hardware market with its GPUs, accounting for an estimated 70% share, the company’s reported $20 billion acquisition of Groq – its largest to date – isn’t about maintaining that dominance. It’s about recognizing the limitations of a one-size-fits-all approach and preparing for a future where <strong>AI inference</strong> demands radically different architectures. This isn’t simply a purchase; it’s a strategic realignment, and a harbinger of a more fragmented, specialized AI hardware ecosystem.</p>

<h2>Beyond GPUs: The Rise of the Language Processing Unit (LPU)</h2>

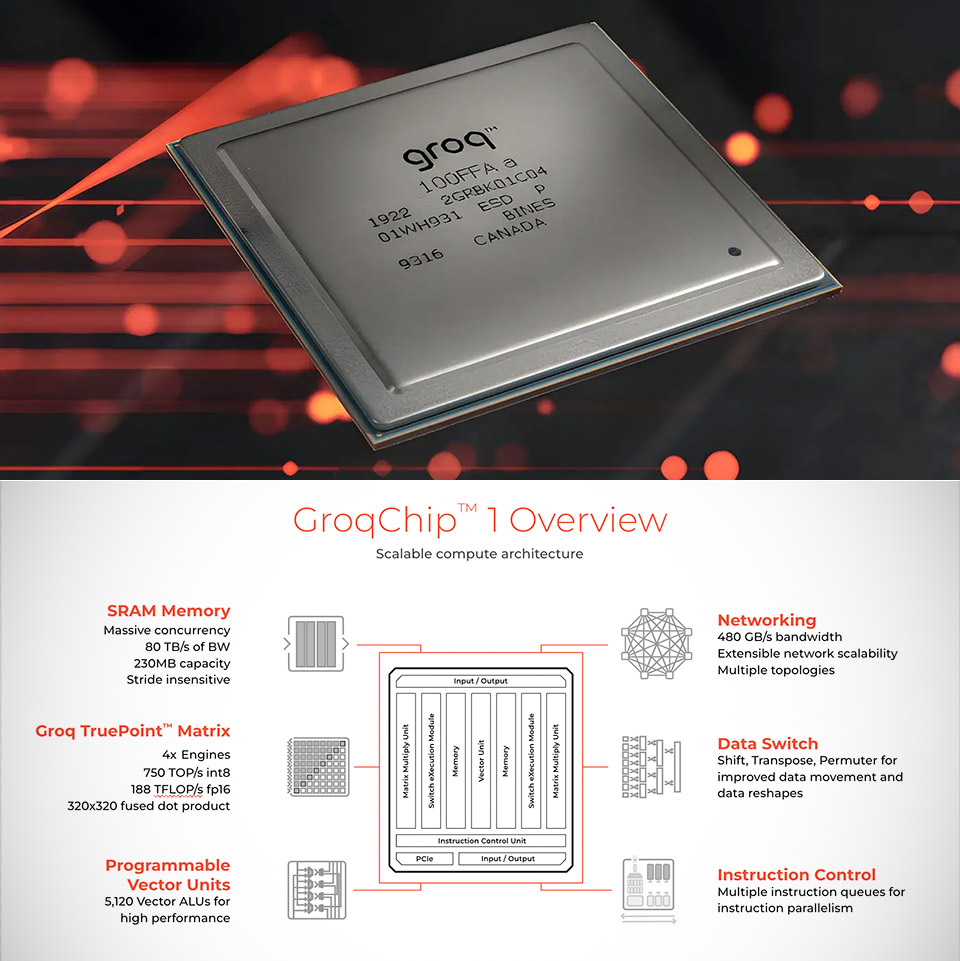

<p>For years, Nvidia has successfully leveraged the parallel processing power of GPUs for both AI training and inference. Groq’s technology takes a fundamentally different approach. The startup has pioneered the Language Processing Unit (LPU), a chip designed specifically for large language models (LLMs) and other inference-focused AI workloads. GPUs are general-purpose parallel processors and are not tuned for any single workload; LPUs are optimized for speed and predictability when serving already-trained AI models.</p>

<p>This distinction is crucial. While training AI models requires massive computational power, inference – the process of <em>using</em> those models to generate outputs – is increasingly becoming the bottleneck. Applications like real-time translation, chatbot responses, and autonomous vehicle decision-making require incredibly low latency and consistent performance. Groq’s LPU architecture, with its deterministic execution and focus on minimizing data movement, directly addresses these challenges.</p>

<h3>The Deterministic Advantage: Why Predictability Matters</h3>

<p>Traditional AI hardware often suffers from performance variability. Factors like memory access patterns and background processes can introduce unpredictable delays. Groq’s LPU, however, is designed for deterministic execution. This means that for a given input, the output will be generated in a predictable amount of time, every time. This is paramount for applications where timing is critical, such as financial trading algorithms or industrial control systems.</p>
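<p>To make the idea of predictability concrete, here is a minimal Python sketch that compares median (p50) and tail (p99) latency for two toy request streams. Everything in it is an assumption for illustration: the function names, the 10 ms base latency, the 40 ms stall, and the 5% stall probability are hypothetical and are not measurements of any GPU or LPU.</p>

```python
import math
import random


def percentile(samples, pct):
    """Nearest-rank percentile (pct in 0..100) of a non-empty list."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_stats(samples):
    """Summarize a list of latencies (ms) by median, tail, and their ratio."""
    p50 = percentile(samples, 50)
    p99 = percentile(samples, 99)
    return {"p50": p50, "p99": p99, "tail_ratio": p99 / p50}


def simulate_variable(n, base_ms=10.0, stall_ms=40.0, stall_prob=0.05, seed=0):
    """Toy model (hypothetical numbers): some requests hit an extra stall."""
    rng = random.Random(seed)
    return [
        base_ms + (stall_ms if rng.random() < stall_prob else 0.0)
        for _ in range(n)
    ]


def simulate_fixed(n, base_ms=10.0):
    """Toy model of deterministic execution: every request takes base_ms."""
    return [base_ms] * n


if __name__ == "__main__":
    print("variable:", latency_stats(simulate_variable(10_000)))
    print("fixed:   ", latency_stats(simulate_fixed(10_000)))
```

<p>In this toy model both streams share the same median, but the variable stream’s p99 is several times higher. That gap between typical and worst-case latency is what timing-critical applications have to budget for, and it is the gap deterministic execution aims to close.</p>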

<h2>Implications for Edge Computing and Beyond</h2>

<p>The acquisition has significant implications beyond data centers. The efficiency and low latency of Groq’s technology make it ideally suited for <strong>edge computing</strong> – processing data closer to the source, rather than relying on centralized servers. Imagine a future where autonomous drones can process sensor data and make decisions in real-time, without the need for a constant connection to the cloud. Or smart factories where AI-powered quality control systems can instantly identify defects on the production line.</p>

<p>Nvidia’s move also signals a broader trend: the specialization of AI hardware. We’re likely to see a proliferation of chips designed for specific AI tasks, including image recognition, natural language processing, and robotics. That is likely to increase competition and innovation, which could in turn drive down costs and accelerate the adoption of AI across industries.</p>

<table>

<thead>

<tr>

<th>Feature</th>

<th>GPU</th>

<th>LPU (Groq)</th>

</tr>

</thead>

<tbody>

<tr>

<td>Architecture</td>

<td>Massively Parallel</td>

<td>Deterministic, Single-Core</td>

</tr>

<tr>

<td>Primary Use Case</td>

<td>Training &amp; Inference</td>

<td>Inference (LLMs)</td>

</tr>

<tr>

<td>Latency</td>

<td>Variable</td>

<td>Low &amp; Predictable</td>

</tr>

<tr>

<td>Efficiency</td>

<td>Good (General Purpose)</td>

<td>High (Specialized)</td>

</tr>

</tbody>

</table>

<h2>The Competitive Landscape: A New Era of AI Chipmakers</h2>

<p>Nvidia isn’t the only player recognizing the need for specialized AI hardware. Companies like Cerebras Systems, Graphcore, and SambaNova Systems are also developing alternative architectures. The Groq acquisition will likely intensify competition in this space, forcing other chipmakers to accelerate their own innovation efforts. We can expect to see further consolidation and strategic partnerships as the industry matures.</p>

<p>Furthermore, the move highlights the growing importance of software optimization. Even the most advanced hardware delivers little without efficient software tools and frameworks. Nvidia will need to integrate Groq’s technology cleanly into its existing software ecosystem to fully realize its potential.</p>

<h2>Frequently Asked Questions About AI Hardware Specialization</h2>

<h3>What does this acquisition mean for existing Nvidia GPU users?</h3>

<p>In the short term, very little. Nvidia will likely continue to support and improve its GPU offerings. However, over the long term, we can expect to see Nvidia offering a more diverse portfolio of AI hardware, including LPU-based solutions for specific workloads.</p>

<h3>Will specialized AI chips become more expensive?</h3>

<p>Initially, specialized chips may be more expensive due to lower production volumes. However, as demand increases and manufacturing processes improve, costs are likely to come down. The increased efficiency of these chips could also offset the higher upfront cost.</p>
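<p>The trade-off between a higher upfront price and lower running costs can be sketched as a simple break-even calculation. The function name and all figures below are hypothetical, chosen only to show the arithmetic; they are not actual prices for any chip.</p>

```python
def breakeven_months(upfront_general, upfront_special,
                     monthly_cost_general, monthly_cost_special):
    """Months until a pricier but cheaper-to-run chip pays for itself.

    Returns 0.0 if the specialized chip is not more expensive upfront,
    and None if it never breaks even (no monthly saving).
    """
    extra_upfront = upfront_special - upfront_general
    monthly_saving = monthly_cost_general - monthly_cost_special
    if extra_upfront <= 0:
        return 0.0
    if monthly_saving <= 0:
        return None
    return extra_upfront / monthly_saving


if __name__ == "__main__":
    # Hypothetical figures: 6,000 extra upfront, 300 saved per month.
    print(breakeven_months(10_000, 16_000, 900, 600))
```

<p>With these made-up figures, the extra 6,000 upfront is recovered after 20 months of saving 300 per month. Real comparisons would also need to account for utilization, throughput per chip, and software migration costs.</p>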

<h3>How will this impact the development of new AI models?</h3>

<p>The availability of specialized hardware will encourage developers to design AI models that are optimized for specific architectures. This could lead to more efficient and powerful AI applications.</p>

<p>Nvidia’s acquisition of Groq isn’t just a financial transaction; it’s a bold statement about the future of AI. The era of general-purpose AI hardware is giving way to a new age of specialization, where the right chip for the right task will be the key to unlocking the full potential of artificial intelligence. The race is on to build the next generation of AI accelerators, and the implications for industries across the globe are profound.</p>


<script>

{

"@context": "https://schema.org",

"@type": "NewsArticle",

"headline": "Nvidia’s Groq Acquisition: The Dawn of Specialized AI Hardware",

"datePublished": "2025-06-24T09:06:26Z",

"dateModified": "2025-06-24T09:06:26Z",

"author": {

"@type": "Person",

"name": "Archyworldys Staff"

},

"publisher": {

"@type": "Organization",

"name": "Archyworldys",

"url": "https://www.archyworldys.com"

},

"description": "Nvidia's $20 billion deal for Groq signals a pivotal shift towards specialized AI chips, moving beyond general-purpose GPUs. Explore the implications for AI inference, edge computing, and the future of hardware acceleration."

}

{

"@context": "https://schema.org",

"@type": "FAQPage",

"mainEntity": [

{

"@type": "Question",

"name": "What does this acquisition mean for existing Nvidia GPU users?",

"acceptedAnswer": {

"@type": "Answer",

"text": "In the short term, very little. Nvidia will likely continue to support and improve its GPU offerings. However, over the long term, we can expect to see Nvidia offering a more diverse portfolio of AI hardware, including LPU-based solutions for specific workloads."

}

},

{

"@type": "Question",

"name": "Will specialized AI chips become more expensive?",

"acceptedAnswer": {

"@type": "Answer",

"text": "Initially, specialized chips may be more expensive due to lower production volumes. However, as demand increases and manufacturing processes improve, costs are likely to come down. The increased efficiency of these chips could also offset the higher upfront cost."

}

},

{

"@type": "Question",

"name": "How will this impact the development of new AI models?",

"acceptedAnswer": {

"@type": "Answer",

"text": "The availability of specialized hardware will encourage developers to design AI models that are optimized for specific architectures. This could lead to more efficient and powerful AI applications."

}

}

]

}

</script>