The Tortora Case & The Erosion of Due Process: A Warning for the Age of Algorithmic Justice

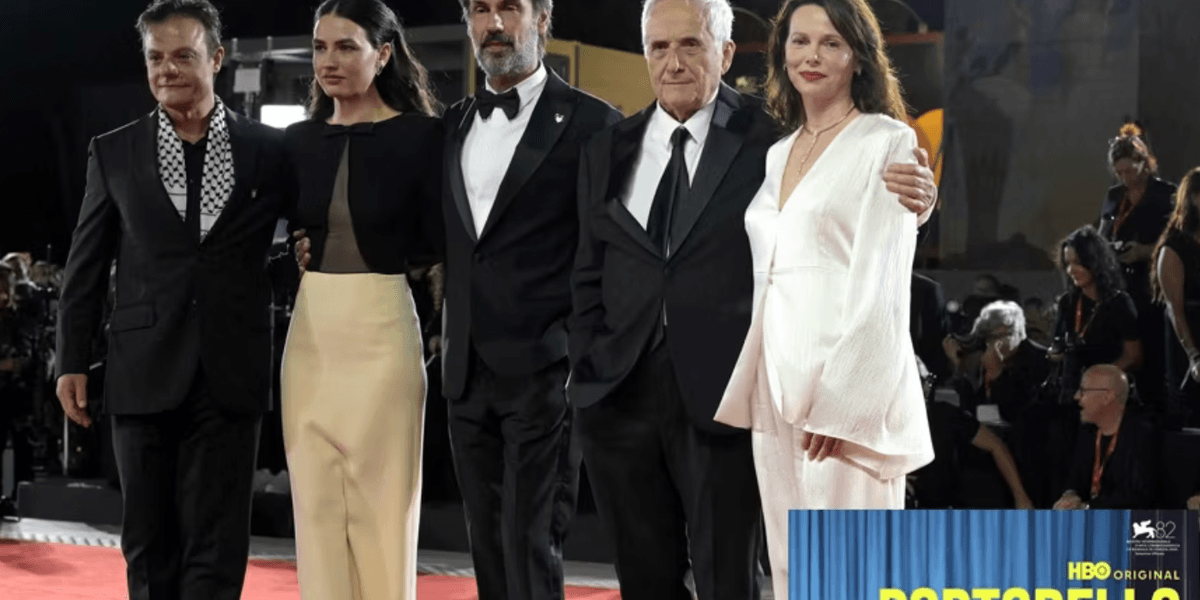

In Italy, a chilling story of wrongful conviction returns to the spotlight with the release of Marco Bellocchio’s HBO Max miniseries “Portobello.” The series meticulously reconstructs the devastating case of Enzo Tortora, the popular host of the RAI television show “Portobello,” who was unjustly accused of ties to the Camorra, the Neapolitan mafia. But “Portobello” is more than a compelling true-crime drama; it is also a stark warning. As algorithmic decision-making increasingly permeates our justice systems, the vulnerabilities exposed in Tortora’s ordeal (the power of unchecked accusations, the rush to judgment fueled by media frenzy, and the fragility of due process) are poised to be amplified, not diminished. **Due process** itself is becoming a precarious concept in a world demanding instant results.

The Anatomy of a Miscarriage of Justice

The Tortora case, which began with his arrest in June 1983, was a perfect storm of circumstantial evidence, biased investigations, and a public eager for scapegoats amid a climate of fear. Tortora was arrested on the word of Camorra turncoats (pentiti) whose accusations fell apart when he was acquitted on appeal in 1986, but the media coverage that followed his arrest painted him as guilty before any real evidence surfaced. As detailed in reports from La Verità and Corriere della Sera, Bellocchio’s series doesn’t shy away from portraying the systemic failures: the ambition of prosecutors, the eagerness of journalists, and the willingness of a nation to believe the worst. Fabrizio Gifuni’s portrayal of Tortora, as noted by La Gazzetta dello Sport, is a “spietata” (ruthless) depiction of a man stripped of his reputation and freedom.

From Portobello to Predictive Policing: The Algorithmic Threat

What makes the Tortora case particularly relevant today isn’t simply its historical injustice, but the way it foreshadows the dangers inherent in modern justice systems. Algorithms are already used in some jurisdictions to assess risk, predict recidivism, and inform sentencing, and their role keeps growing. When these algorithms are trained on biased data, they can perpetuate and amplify existing inequalities, producing disproportionate outcomes for marginalized communities. The rush to embrace “efficiency” in law enforcement, mirroring the haste to condemn Tortora, risks sacrificing fundamental rights on the altar of perceived security.

The Bias Embedded in the Code

The problem isn’t necessarily malicious intent, but rather the inherent limitations of algorithmic systems. As Cathy O’Neil argues in her book *Weapons of Math Destruction*, algorithms are opinions embedded in code. If the data used to train them reflects societal biases (and it almost always does), the resulting predictions are likely to reproduce those biases. This can become a self-fulfilling prophecy: people are targeted on the basis of flawed predictions, the extra scrutiny generates more records about them, and those records reinforce the very patterns the algorithms are supposed to identify.
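To make the self-fulfilling prophecy concrete, here is a deliberately simplified Python sketch. It assumes a hypothetical predictive-policing rule (every patrol goes to whichever district has the most recorded incidents) and assumes incidents are only recorded where patrols are present. The function and parameter names are illustrative and do not come from any real system.

```python
from typing import List


def simulate_feedback_loop(
    initial_records: List[float],
    true_rate: List[float],
    patrols_per_day: int,
    days: int,
) -> List[float]:
    """Toy model of a predictive-policing feedback loop (hypothetical rule).

    Each day the district with the most *recorded* incidents is flagged as
    the hotspot and receives every patrol. Incidents are only recorded where
    patrols are present, so the flagged district keeps accumulating records.
    """
    records = list(initial_records)
    for _ in range(days):
        # "Prediction": flag the district with the highest recorded count.
        hotspot = max(range(len(records)), key=lambda i: records[i])
        # Only the patrolled district generates new records; offenses
        # elsewhere go unobserved, whatever their true rate.
        records[hotspot] += true_rate[hotspot] * patrols_per_day
    return records


if __name__ == "__main__":
    # Two districts with the SAME underlying rate but a skewed history.
    result = simulate_feedback_loop([60, 40], [0.10, 0.10], patrols_per_day=10, days=100)
    total = sum(result)
    for i, count in enumerate(result):
        print(f"district {i}: {count:.0f} records ({count / total:.0%} of total)")
```

Starting from 60 and 40 recorded incidents with identical underlying rates, the first district ends with 160 records and the second stays at 40, so its share grows from 60% to 80%. Nothing about actual behavior differs between the two districts; the skew in the historical data alone is locked in and widened. Real systems are far more complex, but this winner-take-all rule isolates the mechanism.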

The Erosion of Human Oversight

Furthermore, the growing reliance on algorithmic decision-making often comes at the expense of human oversight. Judges and parole boards may defer to algorithmic recommendations without fully understanding their underlying assumptions or limitations. Accountability becomes diffuse, and the potential for error grows. The “mediocre and envious” people that Bellocchio’s series puts in the spotlight, as Wired Italia’s review notes, readily condemned Tortora without due diligence; their modern analogue is the unquestioning acceptance of algorithmic outputs.

Location as a Reflection of Societal Fault Lines

Interestingly, SiViaggia’s exploration of the filming locations for “Portobello” reveals how the physical spaces themselves – the courtyards, prisons, and television studios – become symbolic representations of the societal structures that enabled Tortora’s persecution. These locations aren’t merely backdrops; they are integral to understanding the context of the case and the forces at play. Similarly, the data centers and server farms that house the algorithms shaping our future justice systems are becoming the new sites of power and control, often hidden from public view.

| Dimension | 1980s (Tortora Case) | Today (Algorithmic Justice) |
|---|---|---|
| Source of Bias | Informant Testimony, Media Hype | Biased Training Data, Algorithmic Design |
| Speed of Judgment | Rapid Public Condemnation | Instant Risk Assessments |
| Accountability | Diffuse, Limited Oversight | Algorithmic Opacity, Lack of Transparency |

Safeguarding Due Process in the Digital Age

The lessons of “Portobello” are clear: we must be vigilant in protecting due process, even – and especially – in the face of technological advancements. This requires a multi-faceted approach, including greater transparency in algorithmic decision-making, robust oversight mechanisms, and a commitment to addressing the biases embedded in our data. We need to move beyond simply asking whether algorithms *can* improve our justice systems, and instead focus on ensuring that they *do* so in a way that is fair, equitable, and accountable.

Frequently Asked Questions About Algorithmic Justice

What can be done to mitigate bias in algorithmic justice systems?

Several strategies can be employed, including diversifying training data, using fairness-aware algorithms, and implementing regular audits to identify and correct biases. Crucially, human oversight and the ability to appeal algorithmic decisions are essential.
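One concrete form of a regular bias audit is to compare error rates across groups. The Python sketch below assumes a hypothetical list of records, each with a group label, the tool’s “high risk” flag, and whether the person actually reoffended. It computes each group’s false positive rate (the share of people who did not reoffend but were still flagged) and checks whether the gap between groups stays within a tolerance. The field names and the 0.1 tolerance are illustrative assumptions, not a legal or industry standard.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple


class Record(NamedTuple):
    group: str        # demographic group label (hypothetical field)
    flagged: bool     # did the tool label this person "high risk"?
    reoffended: bool  # observed outcome during the follow-up period


def false_positive_rates(records: Iterable[Record]) -> Dict[str, float]:
    """Per group: share of people who did NOT reoffend but were flagged anyway."""
    negatives: Dict[str, int] = defaultdict(int)
    flagged_negatives: Dict[str, int] = defaultdict(int)
    for r in records:
        if r.reoffended:
            continue  # only people who did not reoffend can be false positives
        negatives[r.group] += 1
        if r.flagged:
            flagged_negatives[r.group] += 1
    return {g: flagged_negatives[g] / n for g, n in negatives.items()}


def passes_fpr_audit(records: Iterable[Record], tolerance: float = 0.1) -> bool:
    """True if the largest gap between group false positive rates is within tolerance."""
    rates = false_positive_rates(records)
    if len(rates) < 2:
        return True  # nothing to compare
    return max(rates.values()) - min(rates.values()) <= tolerance


if __name__ == "__main__":
    # Toy, made-up records purely to exercise the functions.
    sample = [
        Record("A", True, False), Record("A", False, False),
        Record("A", False, False), Record("A", False, False),
        Record("B", True, False), Record("B", True, False),
        Record("B", False, False), Record("B", False, False),
        Record("A", True, True), Record("B", False, True),
    ]
    print(false_positive_rates(sample))  # {'A': 0.25, 'B': 0.5}
    print(passes_fpr_audit(sample))      # False: a 0.25 gap exceeds 0.1
```

Equal false positive rates are only one possible fairness criterion; calibration across groups is another, and when groups have different base rates these criteria generally cannot all be satisfied at once. An audit therefore also has to decide, openly, which kind of error matters most.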

How can we ensure transparency in algorithmic decision-making?

Transparency requires making the underlying code and data used to train algorithms publicly accessible (where possible) and providing clear explanations of how algorithmic decisions are made. This is a complex challenge, but it is essential for building trust and accountability.
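One way to picture a “clear explanation” is a transparent, additive scoring rule in which every point can be traced back to a named factor. The Python sketch below uses hypothetical factor names and weights invented purely for illustration; real risk tools use different inputs, and many are far less interpretable than this.

```python
from typing import Dict, List, Tuple

# Hypothetical factors and weights, invented for illustration only.
# They are NOT the inputs or weights of any real risk-assessment tool.
WEIGHTS: Dict[str, int] = {
    "prior_convictions": 2,
    "failed_to_appear": 3,
    "age_under_25": 1,
}


def explain_score(features: Dict[str, int]) -> Tuple[int, List[str]]:
    """Return an additive risk score plus a per-factor breakdown of where it came from."""
    contributions = {name: weight * features.get(name, 0) for name, weight in WEIGHTS.items()}
    total = sum(contributions.values())
    # List non-zero contributions, largest first, so the main driver is read first.
    breakdown = [
        f"{name}: +{points}"
        for name, points in sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
        if points
    ]
    return total, breakdown


if __name__ == "__main__":
    score, reasons = explain_score({"prior_convictions": 2, "failed_to_appear": 1})
    print(score)    # 7
    print(reasons)  # ['prior_convictions: +4', 'failed_to_appear: +3']
```

The design choice here is legibility: a defendant’s lawyer can check each line of the breakdown and contest a specific factor, which is much harder when the score comes from an opaque model.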

Is algorithmic justice inevitable?

Not necessarily. While the trend towards algorithmic decision-making is undeniable, we have the power to shape its trajectory. By prioritizing ethical considerations and investing in robust safeguards, we can harness the potential benefits of technology while mitigating its risks.

The story of Enzo Tortora is a cautionary tale, a reminder that the pursuit of justice must always be tempered with humility, skepticism, and an unwavering commitment to protecting the rights of the accused. As we navigate the complexities of the digital age, we must learn from the past to avoid repeating its mistakes. What are your predictions for the future of algorithmic justice? Share your insights in the comments below!

Discover more from Archyworldys
