NVIDIA’s AI Factory: Beyond Hardware, Towards a New Era of Compute

By 2027, the global AI chip market is projected to reach $400 billion. This explosive growth isn’t just about faster processors; it’s about a fundamental shift in how AI is designed, built, and deployed. NVIDIA, currently dominating this space, is betting big on a future where it’s not just a chip vendor, but a complete AI infrastructure provider – an ‘AI Factory’ – and recent announcements signal a potentially pivotal strategic realignment.

The AI Factory Vision: A Vertically Integrated Future

NVIDIA CEO Jensen Huang’s GTC 2026 unveiling of the “AI Factory” concept isn’t simply a marketing slogan. It represents a move towards vertically integrating the entire AI stack, from chip design (like the new Vera CPU) and server architecture (the Kyber rack) to software and even manufacturing processes. This control allows NVIDIA to optimize performance at every level, creating a significant barrier to entry for competitors.

Vera CPU: Challenging the CPU Status Quo

The introduction of the Vera CPU, specifically designed for AI workloads, is a direct challenge to traditional CPU manufacturers. While details remain limited, early indications suggest a performance leap that could redefine the role of the CPU in AI systems. Instead of relying on general-purpose processors, Vera is engineered from the ground up to accelerate AI tasks, potentially unlocking new levels of efficiency and speed. The question isn’t whether Vera is powerful, but whether it can effectively complement NVIDIA’s GPU dominance and create a truly synergistic compute environment.

Rubin and Kyber: A New Server Architecture

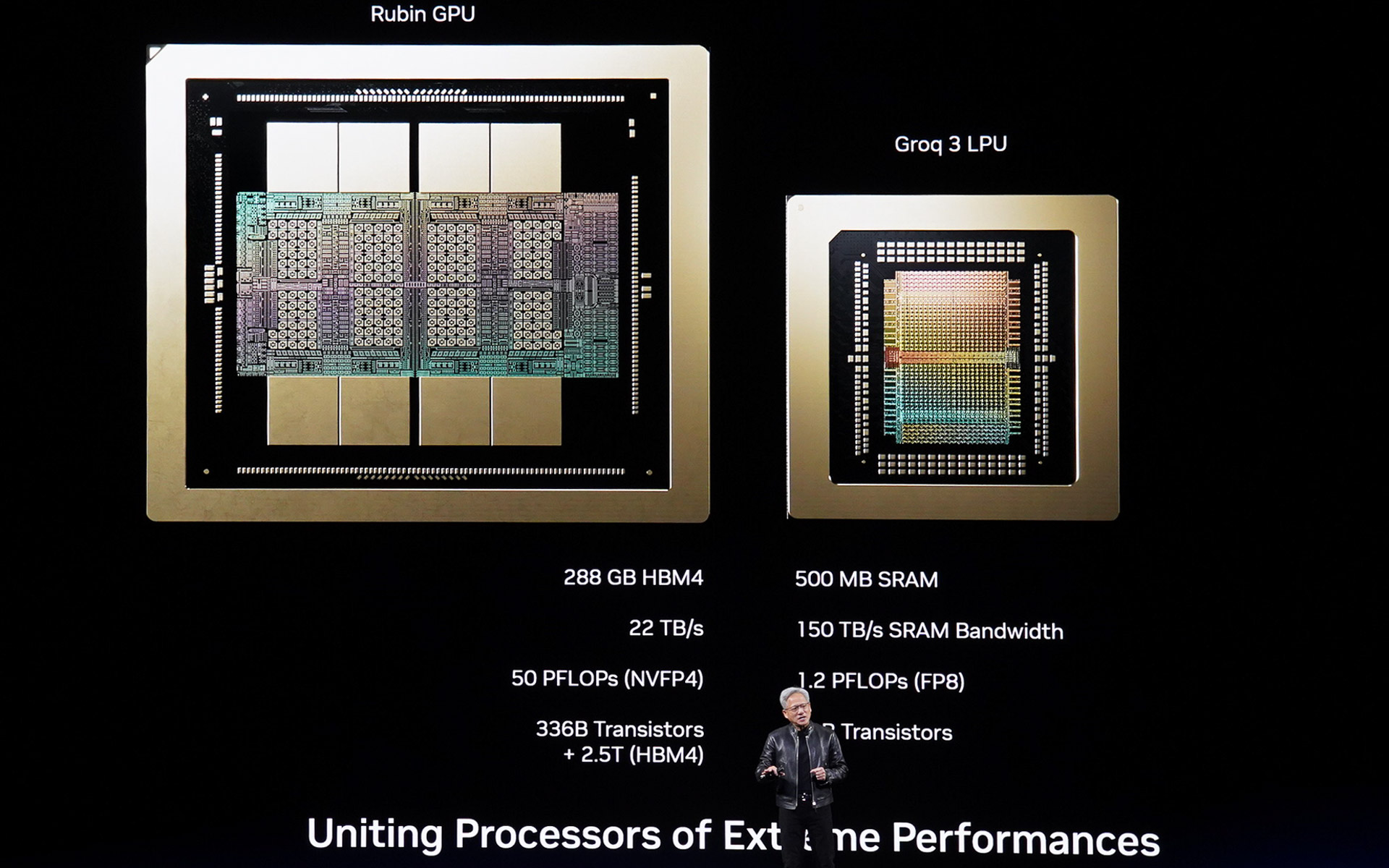

NVIDIA’s Rubin Ultra GPU, coupled with the innovative Kyber server rack, represents a significant departure from conventional server designs. The vertical orientation of components within Kyber isn’t merely an aesthetic choice; it’s a strategic move to maximize cooling efficiency and density, both crucial for handling the immense power demands of advanced AI models. According to the Nikkei, this optimized architecture delivers a 35x performance increase, solidifying NVIDIA’s lead in high-performance computing.

The Groq Factor: A Strategic Re-evaluation?

While NVIDIA pushes forward with its integrated vision, the company’s relationship with Groq, a specialist in Language Processing Units (LPUs), is becoming increasingly complex. Reports suggest NVIDIA is evaluating whether to fully integrate Groq’s technology or maintain a more arm’s-length partnership. Groq’s LPU architecture offers a different approach to AI acceleration, focusing on deterministic performance and low latency. This could be a crucial element in NVIDIA’s strategy to address specific AI applications, particularly those requiring real-time responsiveness.

Navigating Antitrust Concerns

NVIDIA’s aggressive expansion and market dominance haven’t gone unnoticed by regulators. The company is facing increasing scrutiny regarding potential antitrust violations, particularly concerning its attempted acquisition of Arm (which ultimately fell through) and its control over key AI technologies. NVIDIA’s recent moves, including the development of its own CPU and server architecture, can be interpreted as a proactive attempt to mitigate these concerns by demonstrating its commitment to innovation and competition, rather than relying solely on acquisitions.

The Future of AI Compute: Specialization and Integration

The trend towards specialized AI hardware, exemplified by NVIDIA’s Vera CPU and Groq’s LPU, is likely to accelerate. General-purpose processors will continue to play a role, but increasingly, AI workloads will be offloaded to dedicated accelerators optimized for specific tasks. However, the real game-changer will be the seamless integration of these diverse components into a cohesive, vertically integrated system – the ‘AI Factory’ model. This integration will require sophisticated software and orchestration tools, further solidifying NVIDIA’s advantage.

Frequently Asked Questions About the Future of AI Compute

What impact will NVIDIA’s Vera CPU have on the broader CPU market?

The Vera CPU is unlikely to completely displace traditional CPUs, but it will force competitors to innovate and develop more AI-focused processors. We can expect to see increased competition in the CPU space, with a greater emphasis on AI acceleration capabilities.

How will NVIDIA’s ‘AI Factory’ strategy affect smaller AI startups?

The ‘AI Factory’ model could create challenges for smaller startups, as it raises the barrier to entry for building and deploying AI systems. However, it also presents opportunities for collaboration and specialization, allowing startups to focus on niche applications and leverage NVIDIA’s infrastructure.

What role will open-source AI frameworks play in this evolving landscape?

Open-source frameworks like TensorFlow and PyTorch will remain crucial for AI development, providing flexibility and fostering innovation. NVIDIA will likely continue to support these frameworks, while also developing its own proprietary tools and libraries to optimize performance on its hardware.

The future of AI compute isn’t just about building faster chips; it’s about creating a holistic ecosystem that empowers developers and accelerates innovation. NVIDIA’s ‘AI Factory’ vision represents a bold step towards this future, and its success will depend on its ability to navigate the complex challenges of technological innovation, market competition, and regulatory scrutiny.
