The VRAM Revolution: How Neural Texture Compression & DLSS are Redefining PC Gaming’s Limits

Many recent AAA games can demand over 12GB of VRAM at 1440p, and that figure keeps climbing with new releases. This isn’t just a performance bottleneck; it’s becoming a barrier to entry for many gamers. But two technologies, NVIDIA’s Neural Texture Compression and the evolving DLSS suite, promise to alter this landscape, potentially unlocking higher visual fidelity and performance even on mid-range hardware. This is less about incremental improvement than about a shift in how games are rendered and delivered.

The VRAM Crunch: Why We’re Running Out of Memory

For years, GPU manufacturers have focused on increasing VRAM capacity, but this approach is hitting diminishing returns. VRAM is expensive, and simply adding more doesn’t address the underlying issue: the sheer size of textures in modern games. High-resolution textures are crucial for visual realism, but they consume vast amounts of memory. This is where NVIDIA’s Neural Texture Compression steps in, offering a potentially game-changing solution.
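
To make the scale concrete, here is a minimal back-of-the-envelope sketch of how much memory a single texture can occupy. The function name `texture_bytes` and the 4096x4096 RGBA8 example are illustrative assumptions, not figures from any particular game, and the calculation ignores format-specific details such as block alignment and padding.

```python
def texture_bytes(width: int, height: int, bytes_per_texel: int = 4,
                  mipmaps: bool = True) -> int:
    """Approximate memory for one 2D texture, optionally with a full mip chain.

    Assumes a simple uncompressed layout (default 4 bytes per texel, i.e. RGBA8).
    """
    total = 0
    w, h = width, height
    while True:
        total += w * h * bytes_per_texel
        if not mipmaps or (w == 1 and h == 1):
            break
        # Each mip level halves both dimensions, never going below 1 texel.
        w, h = max(1, w // 2), max(1, h // 2)
    return total


MIB = 1024 * 1024
base = texture_bytes(4096, 4096, mipmaps=False)
with_mips = texture_bytes(4096, 4096)
print(f"4096x4096 RGBA8, no mips:   {base / MIB:.1f} MiB")
print(f"4096x4096 RGBA8, with mips: {with_mips / MIB:.1f} MiB")
```

Under these assumptions a single uncompressed 4096x4096 texture takes 64 MiB, and the mip chain adds roughly a third on top. Multiply that by the hundreds of textures in a modern scene and the pressure on VRAM becomes clear, even before conventional compression is applied.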

How Neural Texture Compression Works

Unlike traditional texture compression methods that often result in noticeable visual artifacts, Neural Texture Compression leverages AI to intelligently compress textures *without* significant quality loss. It essentially learns the patterns within textures and creates a more efficient representation, reducing VRAM usage by up to 33% in early tests. This allows developers to either increase texture resolution for improved visuals or maintain existing resolutions while freeing up VRAM for other tasks, like higher ray tracing settings or more complex game logic.
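
As a rough illustration of what a saving of that size could mean, the sketch below applies a reduction to the texture portion of a VRAM budget. Everything here is an assumption for illustration: the function name, the 12 GiB budget, and the 60% texture share are hypothetical, and the article's "up to 33%" figure is treated as applying to texture memory only.

```python
def vram_after_texture_savings(total_gib: float, texture_share: float,
                               reduction: float) -> float:
    """Estimate total VRAM use after shrinking only the texture portion.

    total_gib:     current total VRAM use in GiB (hypothetical budget)
    texture_share: fraction of that use taken by textures (assumed, 0..1)
    reduction:     fractional saving on texture memory (e.g. 0.33)
    """
    if not (0.0 <= texture_share <= 1.0 and 0.0 <= reduction <= 1.0):
        raise ValueError("texture_share and reduction must be between 0 and 1")
    texture_gib = total_gib * texture_share
    return total_gib - texture_gib * reduction


before = 12.0
after = vram_after_texture_savings(before, texture_share=0.6, reduction=0.33)
print(f"Before: {before:.2f} GiB, after: {after:.2f} GiB, "
      f"freed: {before - after:.2f} GiB")
```

With these made-up inputs the freed memory is a little under 2.4 GiB, which a developer could spend on higher-resolution textures or leave as headroom for other workloads.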

DLSS 5: Beyond Upscaling – A Rocky Road to Real-Time Ray Reconstruction

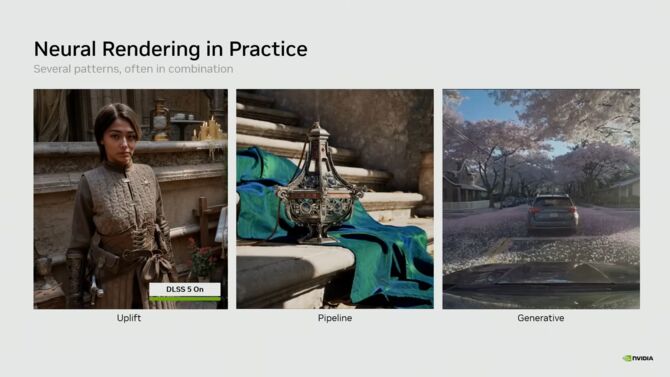

While Neural Texture Compression tackles the memory side of the equation, NVIDIA’s Deep Learning Super Sampling (DLSS) addresses the performance challenge. DLSS 5, despite early criticism, represents a significant step forward in AI-powered rendering. Its core is frame generation and, crucially, an attempt at real-time ray reconstruction. The debate over its initial implementation, fueled by concerns from developers such as the creator of Minecraft, highlights how hard it is to integrate AI into game engines.

The Minecraft Controversy & The Myth of New Artifacts

The Minecraft developer’s criticism centered on perceived visual artifacts introduced by DLSS 5. However, as ITHardware.pl and others have pointed out, many of these artifacts were already present in games, just less noticeable. By upscaling the image and interpolating frames, DLSS 5 makes these existing imperfections more visible. This isn’t necessarily a flaw of the technology itself, but rather a consequence of pushing the boundaries of real-time rendering. The focus should be on refining the AI models and giving developers better tools to mitigate these issues.

Dynamic-MFG x6: A Glimpse into the Future?

PurePCTesty’s exploration of DLSS Dynamic-MFG x6 demonstrates the potential of frame generation to dramatically increase frame rates. While the implementation is currently experimental, it suggests a future where even modest GPUs can deliver smooth, high-fidelity gaming experiences. However, the latency implications of generating multiple frames remain a critical concern, and NVIDIA will need to continue optimizing the technology to minimize input lag.
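
The trade-off between smoothness and responsiveness can be sketched with simple arithmetic. The model below is a simplification under stated assumptions, not NVIDIA's implementation: it assumes "x6" means six displayed frames for every rendered frame, and that player input is only sampled when a frame is actually rendered. The function name and the 40 fps example are hypothetical.

```python
def frame_generation_estimate(rendered_fps: float, multiplier: int) -> dict:
    """Rough numbers for multi-frame generation under a simplified model.

    rendered_fps: frames per second the GPU actually renders
    multiplier:   displayed frames per rendered frame (assumed meaning of "x6")
    """
    if rendered_fps <= 0 or multiplier < 1:
        raise ValueError("rendered_fps must be > 0 and multiplier >= 1")
    displayed_fps = rendered_fps * multiplier
    return {
        "displayed_fps": displayed_fps,
        # Time between frames shown on screen (what looks smooth).
        "displayed_interval_ms": 1000.0 / displayed_fps,
        # Time between frames that reflect fresh input (what feels responsive).
        "rendered_frame_ms": 1000.0 / rendered_fps,
        "generated_per_rendered": multiplier - 1,
    }


est = frame_generation_estimate(rendered_fps=40, multiplier=6)
for key, value in est.items():
    print(f"{key}: {value}")
```

In this example the screen updates 240 times per second, but new input is still only reflected every 25 ms, as it would be at 40 fps. Real pipelines add further delay for generating and pacing the extra frames, which this sketch does not attempt to model.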

| Technology | Primary Benefit | Potential Impact |
|---|---|---|
| Neural Texture Compression | Reduced VRAM Usage | Higher Texture Resolutions, Improved Ray Tracing Performance |
| DLSS 5 | Increased Frame Rates | Playable High-Fidelity Gaming on Mid-Range Hardware |

The Convergence: A New Era of Accessible Fidelity

The true power of these technologies lies in their synergy. Neural Texture Compression reduces the memory footprint, allowing for higher-quality textures and more complex scenes. DLSS 5 then leverages AI to render these scenes efficiently, delivering smooth frame rates without sacrificing visual fidelity. This combination has the potential to democratize high-end gaming, making it accessible to a wider audience.

Frequently Asked Questions About the Future of AI-Powered Rendering

What are the biggest challenges facing DLSS 5?

Latency remains the primary concern. Generating frames introduces inherent delays, and minimizing input lag is crucial for a responsive gaming experience. Further refinement of the AI models to reduce artifacts is also essential.

Will Neural Texture Compression become standard in all games?

It’s likely. The benefits are significant, and the performance impact is minimal. However, adoption will depend on developer integration and optimization.

How will these technologies impact GPU hardware requirements in the future?

They could potentially slow down the relentless demand for ever-increasing VRAM capacity. Instead, the focus might shift towards more powerful AI processing capabilities within GPUs.

Is frame generation a “cheat” or a legitimate performance enhancement?

It’s a legitimate technique, albeit one with trade-offs. While it doesn’t magically create performance, it effectively increases perceived frame rates, providing a smoother gaming experience. Transparency about its implementation is key.

The future of PC gaming isn’t just about raw horsepower; it’s about intelligent rendering. NVIDIA’s Neural Texture Compression and DLSS 5, despite their initial hurdles, represent a pivotal step towards a future where stunning visuals and smooth performance are no longer exclusive to high-end hardware. The evolution of these technologies will be fascinating to watch, and their impact on the gaming landscape will be profound.

What are your predictions for the future of AI-powered rendering? Share your insights in the comments below!
