The Rise of Synthetic Identities: How AI-Powered Impersonation Will Redefine Digital Trust

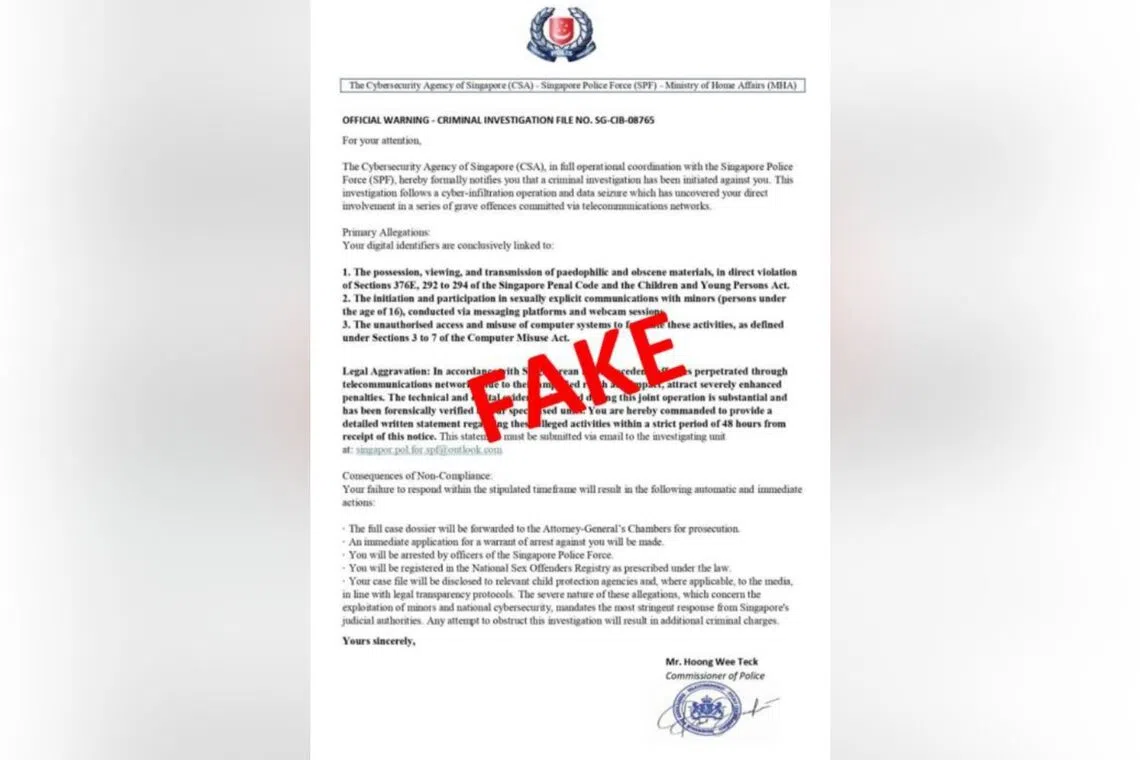

Scam victims in Singapore lost S$651.8 million in 2023 across a record 46,563 reported cases, a 46.8% jump in case volume from the previous year, according to Singapore Police Force figures. While many associate scams with phishing emails and romance fraud, a disturbing trend is emerging: increasingly sophisticated impersonation attacks that borrow the identities of high-profile individuals. Recent warnings from the Singapore Police Force (SPF), the Cyber Security Agency (CSA), and the Ministry of Home Affairs (MHA) about scammers impersonating top officials aren’t isolated incidents; they point to a future where verifying identity online becomes far more difficult. This isn’t just about protecting individuals; it’s about safeguarding the foundations of digital trust.

Beyond Copycat Crimes: The Age of Synthetic Identities

The current wave of impersonation scams, as reported by outlets like The Straits Times, AsiaOne, and Malay Mail, relies on relatively simple techniques – spoofed email addresses, convincing social media profiles, and readily available public information. However, these are merely precursors to a far more potent threat: synthetic identities. These aren’t simply stolen identities; they are entirely fabricated personas constructed using a blend of real and artificial data, powered by advancements in artificial intelligence (AI).

How AI Fuels the Impersonation Engine

Generative AI models, capable of creating realistic text, images, and even videos, are dramatically lowering the barrier to entry for sophisticated impersonation. AI can now:

- Generate convincing deepfakes of individuals, making audio and video evidence unreliable.

- Craft highly personalized phishing messages that bypass traditional security filters.

- Automate the creation and management of fake social media profiles with consistent online activity.

- Synthesize realistic personal data – addresses, employment history, even credit scores – to build complete synthetic identities.

This means that scammers no longer need to steal your identity; they can simply *create* one that convincingly mimics a trusted authority figure.

The Expanding Target Landscape: From Individuals to Institutions

While initial reports focus on impersonating police commissioners and government officials, the scope of this threat is rapidly expanding. Expect to see:

- Impersonation of CEOs and CFOs: AI-powered voice cloning could enable scammers to convincingly impersonate company leaders, authorizing fraudulent transactions.

- Fake Experts and Influencers: Synthetic identities will be used to build credibility in niche areas, promoting scams or spreading disinformation.

- Compromised Supply Chains: Impersonating vendors or suppliers could disrupt operations and lead to financial losses.

- Political Manipulation: Deepfakes and synthetic personas could be deployed to influence public opinion and interfere with democratic processes.

The Erosion of Trust in Digital Verification

Current verification methods – passwords, two-factor authentication, even biometric scans – are becoming increasingly vulnerable to AI-powered attacks. Cloned voices and deepfake video can fool voice and face checks, and AI-written phishing messages can talk users into handing over passwords and one-time codes. As AI gets better at mimicking human behavior, the very concept of “digital proof” will be challenged. This calls for a fundamental shift in how we approach online security.

Building Resilience in the Age of Synthetic Identities

Combating this evolving threat requires a multi-faceted approach:

- Enhanced Authentication: Moving beyond passwords to more robust methods like passwordless authentication and behavioral biometrics.

- AI-Powered Fraud Detection: Leveraging AI to identify anomalies and patterns indicative of synthetic identity fraud.

- Digital Watermarking and Provenance Tracking: Developing technologies to verify the authenticity of digital content.

- Public Awareness Campaigns: Educating the public about the risks of synthetic identities and how to spot potential scams.

- International Collaboration: Sharing intelligence and coordinating efforts to disrupt cross-border fraud networks.
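To make the provenance-tracking idea in the list above more concrete, here is a minimal sketch in Python. It assumes a publisher and a verifier share a secret key and uses an HMAC tag to detect tampering. The key, the sample statement, and the function names are hypothetical and chosen only for illustration. Real provenance systems would more likely rely on public-key signatures, so that anyone can verify content without holding a secret.

```python
import hashlib
import hmac


def sign_content(content: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag that binds the content to the key."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()


def verify_content(content: bytes, tag: str, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = sign_content(content, key)
    return hmac.compare_digest(expected, tag)


# Hypothetical key and statement, for illustration only.
PUBLISHER_KEY = b"demo-key-not-for-production"
statement = b"Official advisory: we will never ask for your banking PIN."
tag = sign_content(statement, PUBLISHER_KEY)

# The untouched statement verifies; an altered copy does not.
print(verify_content(statement, tag, PUBLISHER_KEY))
print(verify_content(statement + b" Send funds now.", tag, PUBLISHER_KEY))
```

A tag like this only shows that the content has not changed since someone holding the key signed it. It says nothing about whether the content was truthful in the first place.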

Furthermore, organizations must prioritize robust internal controls and employee training to prevent successful impersonation attacks. A culture of skepticism and verification is paramount.
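One way to picture such an internal control is an out-of-band confirmation rule: a payment request is approved only after it is confirmed through contact details registered in advance, never through details supplied in the request itself. The sketch below is a simplified illustration in Python. The directory, the `PaymentRequest` fields, and the function names are all hypothetical, not taken from any real system.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of contact numbers registered in advance,
# independent of anything an incoming message claims.
TRUSTED_DIRECTORY = {
    "cfo@example.com": "+65-0000-0001",
}


@dataclass
class PaymentRequest:
    claimed_sender: str
    amount: float
    callback_number: str  # number the message itself asks you to call


def verification_number(request: PaymentRequest) -> Optional[str]:
    """Look up the call-back number from the trusted directory only."""
    return TRUSTED_DIRECTORY.get(request.claimed_sender)


def may_proceed(request: PaymentRequest, confirmed_via: Optional[str]) -> bool:
    """Approve only if confirmation came through the registered channel."""
    registered = verification_number(request)
    return registered is not None and confirmed_via == registered


# A request that supplies its own call-back number.
request = PaymentRequest("cfo@example.com", 250_000.0, "+65-9999-9999")

# Confirming via the number in the message is rejected;
# confirming via the pre-registered number is accepted.
print(may_proceed(request, confirmed_via=request.callback_number))
print(may_proceed(request, confirmed_via="+65-0000-0001"))
```

The design choice here is that nothing in the incoming message, however convincing the voice or video, can influence which channel is used to confirm it.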

Frequently Asked Questions About Synthetic Identities

What is the biggest risk posed by synthetic identities?

The biggest risk is the erosion of trust in digital interactions. As it becomes harder to distinguish between real and fake identities, the entire digital ecosystem becomes more vulnerable to fraud and manipulation.

How can I protect myself from impersonation scams?

Be extremely cautious of unsolicited communications, especially those requesting personal information or financial transactions. Verify the identity of the sender through independent channels, and never rely solely on digital evidence.

Will current security measures be enough to combat this threat?

Not on their own. Passwords, one-time codes, and basic biometric checks can all be undermined by convincing AI-generated impersonation, so a shift towards more robust authentication methods and AI-powered fraud detection is necessary.

The rise of synthetic identities isn’t a distant threat; it’s happening now. The recent scams in Singapore are a wake-up call, signaling the need for proactive measures to safeguard our digital future. Ignoring this trend will leave individuals and organizations increasingly vulnerable to a new era of sophisticated and pervasive fraud.

What are your predictions for the future of digital identity verification? Share your insights in the comments below!
